Where Does Your Organization Stand on AI Maturity?

Basic Summary

AI maturity is a measure of how well your organization can put AI to work in a structured, repeatable way. Most manufacturers and industrial businesses are somewhere in the early-to-middle stages: they’ve run a few pilots or are using AI tools in isolated pockets, but haven’t built the processes, data infrastructure, or internal culture to scale it. This article explains what the main maturity stages look like, what separates companies that get real business value from those that don’t, and how to think about your next steps without overcomplicating it.


A lot of manufacturers and industrial companies are in the same position right now. Leadership has heard enough about AI to know they should be paying attention. Maybe you’ve tried a few tools. Maybe someone on your team ran a pilot. But you’re not entirely sure how far along you actually are, what comes next, or whether what you’ve done so far amounts to anything meaningful.

That uncertainty has a name: it’s an AI maturity problem. Not a capability problem or a budget problem, though those can show up too. It’s a clarity problem. You can’t chart a course if you don’t know where you’re starting.

AI maturity is a way of assessing how well an organization can put AI to sustained, productive use. It’s not a measure of how much AI software you’ve purchased or how many tools your team has access to. It measures how well your people, processes, data, and strategy are aligned to consistently get value from AI.

The reason this matters for manufacturers and industrial businesses specifically is that the operational stakes are high. Downtime is expensive. Decisions made on bad data cost money. Custom software that doesn’t connect well with new AI tools creates friction that compounds. Knowing your organization’s AI maturity level helps you invest in the right things at the right time instead of chasing tools that aren’t built for where you are yet.

What AI Maturity Actually Measures

An AI maturity model is a structured framework that helps organizations identify which stage of AI development they’re in. Researchers and consulting firms have been building and refining these models for years. Most versions describe five or six stages, and while the labels differ somewhat, the underlying progression is consistent.

What’s being measured isn’t just technical capability. It covers data infrastructure, governance, skill levels across the organization, leadership alignment, how well AI is integrated into core business processes, and whether the organization has a coherent AI strategy to guide investment. All of these dimensions feed into your overall maturity level, and they rarely advance at the same pace.

That last point matters a lot in practice. It’s common for a manufacturing business to have strong data infrastructure in one department (say, production monitoring) and almost nothing in another (quoting or customer service). Overall AI maturity is about the aggregate, and gaps in any critical area tend to limit what the organization can actually accomplish with AI, even when other areas are strong.

The goal of using a maturity model isn’t to assign yourself a score. It’s to surface where your gaps are so you can close them in the right sequence. Skipping steps is one of the most common reasons AI initiatives fail. Organizations try to run enterprise-wide AI transformation before they’ve sorted out their data quality problems, or they build sophisticated AI capabilities on top of custom software that wasn’t designed to integrate with anything. The foundation has to come first.

The Common Maturity Stages

While different frameworks use different terminology, most AI maturity models describe a progression through roughly five stages. Here’s how they typically look in practice, particularly for manufacturing and industrial businesses.

- The first stage is what most frameworks call Exploratory. Organizations here have limited AI adoption, if any. Individual team members may be using AI tools on their own, but there’s no coordinated strategy, no governance, and no shared understanding of what AI is supposed to accomplish for the business. Decisions about AI are largely opportunistic or reactive.

- The second stage is often described as Developing. At this level, the organization has run some AI pilots. There’s growing awareness of what AI can do, and leadership has started paying attention. But the work is still siloed. Different teams are trying different things without much coordination, data sharing is limited, and successes haven’t translated into repeatable processes. AI is interesting but inconsistent.

- The third stage is Structured. This is where things start to look more like a real capability. The organization has documented AI practices, a clearer AI strategy, and some formal governance. Data infrastructure has improved, and there’s intentional investment in skill building. AI initiatives are starting to connect to measurable business outcomes, not just interesting experiments. The organization knows what it’s trying to accomplish with AI and has a plan for getting there.

- The fourth stage is Integrated. AI is embedded in core business operations. It’s not a side project anymore. Decision makers have access to AI-generated insights as a normal part of how they work, and the organization regularly measures business impact. Responsible AI practices are in place. The AI roadmap connects clearly to the business strategy. At this level, the organization is getting consistent, repeatable value.

- The fifth stage is Transformative. Few organizations reach this level, and fewer maintain it. AI is continuously refined, integrated across all critical operations, and actively driving enterprise-wide transformation. AI systems learn and improve over time. The organization has deep AI capabilities and a culture that treats AI as a core strategic asset. For most manufacturers and industrial businesses, this is aspirational, not near-term. But it’s useful to know what the destination looks like.

Why Most Organizations Get Stuck in the Middle

The gap between the Developing and Structured stages is where most organizations stall. They’ve done pilots. They have some interest from leadership. But they can’t seem to turn that into consistent, scalable results.

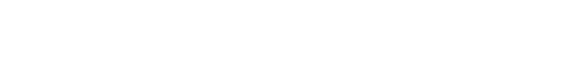

The most common culprit is data quality. AI systems are only as good as the data they run on. Many manufacturers have valuable operational data that is locked in legacy custom software never built for integration, stored in inconsistently formatted records, or undermined by gaps in data collection. Any of these makes it hard to train reliable models or generate trustworthy insights. Before you can scale AI, you have to be able to trust your data.

The second big obstacle is organizational alignment. AI maturity across an organization doesn’t happen by accident. It requires someone to own it and teams that understand how AI fits into their day-to-day work. When those things are missing, AI stays in the lab. Individual contributors may use AI tools on their own, but the organization never gets the coordinated effort needed to build real capability.

The third obstacle is the tendency to treat AI adoption as a technology purchase rather than a business transformation. Buying access to AI platforms is easy. Getting value out of them requires process change, training, integration with existing systems, and patience. Organizations that skip the organizational work and focus only on the technology often end up with expensive tools that don’t get used consistently.

The Role of Data Infrastructure in AI Maturity

For manufacturers and industrial businesses, data infrastructure deserves special attention. Your operations generate enormous amounts of data: production metrics, inventory levels, equipment performance, order history, and customer interactions. That data is a genuine competitive asset. But most of it lives in systems that weren’t designed with AI in mind.

Custom software built five or ten years ago typically stores data in ways that made sense for the specific problem it was solving at the time. Quoting tools, dealer portals, inventory systems, ERP software: all of these generate data, but the data is often difficult to access in a standardized way, difficult to combine across systems, and difficult to trust without serious cleanup.

Advancing your AI maturity often means investing in your data foundation before investing in AI capabilities. That might look like modernizing your custom software so it surfaces data in accessible formats, building integrations between systems so data flows where it needs to go, or establishing consistent data standards across the organization. None of that is glamorous work, but it’s the work that determines whether your AI investments pay off.

If your existing custom software is a barrier to AI progress, that’s worth naming directly. It’s one of the most common situations we see at NorthBuilt: manufacturers who want to move forward with AI initiatives but are constrained by legacy systems that weren’t built to connect with modern tools. Addressing that constraint is often the most impactful thing you can do to improve your organization’s AI maturity.

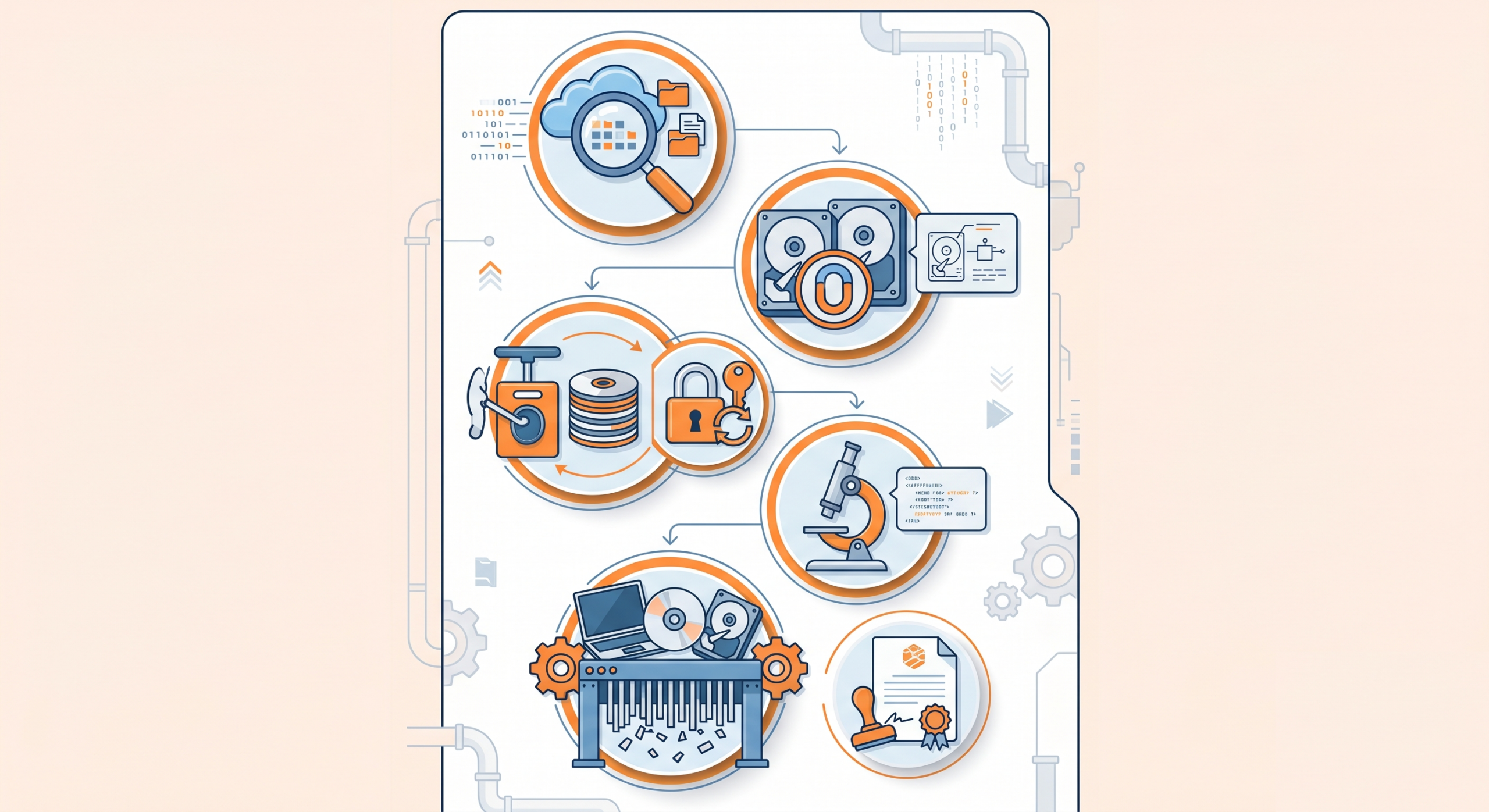

Responsible AI as a Maturity Requirement

Organizations that are serious about moving up the AI maturity curve can’t treat responsible AI as an afterthought. At higher maturity levels, AI systems are influencing decisions that have real consequences: production scheduling, customer pricing, quality control, and staffing. The governance around those systems matters.

Responsible AI means a few practical things. It means understanding what your AI systems are actually doing and why, not just accepting outputs as black boxes. It means having processes in place to catch errors, biases, or unexpected behavior before they cause damage. It means being transparent with your team and your customers about where AI is influencing decisions. And it means having someone who’s accountable when AI systems don’t perform as expected.

For manufacturers, responsible AI also means thinking carefully about how AI tools interact with your existing systems and processes. An AI recommendation that gets fed directly into a production workflow without human review carries a different risk profile from one that informs a decision a manager still makes. Understanding those distinctions and building appropriate oversight into your processes is part of what maturing AI capabilities looks like.

Building a Practical AI Roadmap

Once you have a sense of where your organization sits on the maturity curve, the question becomes what to do about it. An AI roadmap is the mechanism that answers that question. Not a wish list of AI tools you’d like to have, but a structured plan that sequences your investments in a way that builds capability over time.

A practical AI roadmap starts with an honest assessment of your current capabilities. Where is your data? How good is it? What does your team actually know how to do with AI today? What AI tools or initiatives are already in use, even informally? What does leadership care about, and what business outcomes would they point to as proof that AI is working?

From that baseline, you can identify gaps and prioritize improvements. The goal is to find the investments that have the most impact given your current maturity level. Early-stage organizations usually need to focus on data infrastructure and one or two well-defined pilots with clear success metrics. Mid-stage organizations often need to focus on governance, integration, and broadening AI adoption beyond the teams that are already using it. Later-stage organizations are usually focused on optimization and expanding AI capabilities to new areas.

The important discipline is sequencing. Trying to build enterprise-wide AI transformation before your data infrastructure can support it wastes money and erodes confidence in AI initiatives broadly. Moving in stages, proving value at each level before expanding, is the approach that actually works.

What AI Maturity Means for Your Software

For most manufacturers and industrial businesses, AI maturity and software maturity are connected. The custom software you’re running today either enables or constrains what you can do with AI. If your systems generate clean, accessible data, connect to modern tools, and can be updated without major disruption, your AI maturity ceiling is higher. If your systems are aging, poorly documented, or difficult to integrate, that ceiling is lower than it needs to be.

This is one of the reasons NorthBuilt focuses on long-term software health rather than one-time projects. A quoting tool that was built a decade ago and has never been updated isn’t just a maintenance problem. It’s a strategic constraint on what your organization can do with AI and other modern technologies.

If you’re thinking seriously about AI maturity and realizing that your software infrastructure might be holding you back, that’s a useful recognition. The path forward doesn’t necessarily mean replacing everything. Often, it means a systematic approach to modernizing the most critical systems, building integrations that let data flow where it needs to go, and making sure someone is actively maintaining your software rather than just hoping it keeps running.

That’s exactly the kind of work we do at NorthBuilt. If you’d like to talk through where your organization stands and what a realistic path forward looks like, book a call with our team. We’re happy to think through it with you.

Chris Morbitzer

Chris Morbitzer is CEO and co-founder of NorthBuilt, a Minnesota-based software development partner that helps independent manufacturers, agricultural companies, and industrial services firms across the Midwest implement AI and build practical technology solutions.