What Is AI Infrastructure: Building Scalable, Secure Systems for Real-World AI

AI usually enters the conversation because something isn’t working as well as it should. Common examples include slow quoting, reports that lag behind reality, and teams spending hours rekeying data or chasing down information that should already be available. Leaders have heard that AI can automate work or surface better insights, but the real question is whether their systems can support it.

AI doesn’t succeed on the strength of a clever model alone. It succeeds when it’s built on a solid foundation, and that’s where AI infrastructure comes into play. AI infrastructure is the environment that supports AI in production.

What is AI Infrastructure?

AI infrastructure is the full set of hardware, software, technologies, processes, and controls that support AI systems across the stack: data ingestion, data processing, model training, inference, model deployment, and monitoring.

In practice, modern AI infrastructure is built to handle high-throughput pipelines and specialized compute needs while meeting production standards for security and stability.

Importance of AI Infrastructure

If you want AI that’s secure and cost-controlled, the foundation matters. Strong AI infrastructure is what turns a promising proof of concept into an operational capability your business can trust in everyday workflows.

Reliability

Reliable AI systems don’t surprise your team. With solid AI infrastructure, data pipelines run consistently, deployments are controlled, and models behave predictably in production. This reduces outages caused by broken integrations or rushed updates. For operations leaders, reliability means AI can support mission-critical processes without creating new points of failure.

High Performance

Performance is about more than speed in a demo. Strong AI infrastructure delivers fast response times and steady throughput under real workloads. Models can handle peak demand without slowing down, and data processing keeps up as volume grows. When performance is built into the foundation, teams can confidently use AI in day-to-day operations instead of limiting it to low-risk scenarios.

Scalability

AI initiatives rarely stay small. A scalable AI infrastructure allows you to add users, ingest more data, and support more complex models without rebuilding the system from scratch. As adoption grows across departments, the same foundation can support new AI applications while maintaining consistency and control. This makes expansion a planned step rather than a disruptive event.

Cost Control

Without the right foundation, AI costs can quickly spiral out of control. Strong AI infrastructure enables predictable spending by right-sizing resources, separating training and inference workloads, and making usage visible. That visibility helps teams spot waste, avoid overprovisioning, and scale responsibly. Cost control turns AI from a financial risk into a sustainable investment.

Operational Impact for Leaders

When these qualities are in place, the impact is tangible. Decision-making improves because data processing is consistent and trusted. Repetitive tasks such as classification, routing, extraction, and summarization can be automated with confidence. Systems become more resilient, reducing dependence on a single developer or undocumented tribal knowledge.

Why the Foundation Matters

When AI adoption fails, it’s often due to gaps in the foundation. Brittle integrations break when upstream systems change. Inconsistent data handling produces conflicting outputs that undermine trust. Weak governance and data security controls introduce risks that slow or block deployment altogether.

Your AI investments only pay off when results are repeatable and scalable. AI infrastructure is what makes that possible, providing the stability needed to turn what works today into a capability you can rely on tomorrow.

Key Components of AI Infrastructure

AI infrastructure has six core components. Together, they provide the practical foundation that production AI depends on.

Computational Power

Compute is where most teams feel the pain first. Compute resources determine how fast you can train and serve models, as well as which types of models are practical.

- Central processing units (CPUs) are excellent for preprocessing, orchestration, and many data tasks. They often handle the “glue work” that keeps pipelines moving.

- Graphics processing units (GPUs) accelerate training and inference through massive parallelism. They’re common for deep learning, large language models, and many other machine learning workloads.

- Tensor processing units (TPUs), Google’s custom accelerators, can be a fit for tensor-heavy training and inference, especially when you’re optimizing for specific model families.

Why this matters: parallel processing capability is a major driver of training speed and system performance. When teams undersize compute, training slows, experiments get delayed, and production inference becomes unstable. When they oversize it, costs balloon.

Specialized hardware makes sense when:

- You’re training frequently, on larger datasets

- You need low-latency inference under real user load

- You’re supporting multiple AI applications across teams

It can be wasteful when your primary need is occasional batch scoring or lightweight models that run well on CPUs.
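The criteria above can be turned into a simple, explainable first-pass check. The sketch below assumes a hypothetical `Workload` description and illustrative thresholds (`FREQUENT_TRAINING`, `LARGE_DATASET_GB`, and so on) that are not from any standard; it only encodes the three conditions listed and returns the reasons, so the decision can be reviewed rather than trusted blindly.

```python
from __future__ import annotations
from dataclasses import dataclass

@dataclass
class Workload:
    trainings_per_month: int      # how often models are (re)trained
    dataset_gb: float             # size of the training dataset
    p95_latency_ms_target: float  # latency target for user-facing inference
    ai_apps_served: int           # AI applications sharing the platform

# Hypothetical thresholds for illustration only; tune them to your own workloads.
FREQUENT_TRAINING = 4
LARGE_DATASET_GB = 100
LOW_LATENCY_MS = 200
SHARED_PLATFORM_APPS = 3

def recommend_compute(w: Workload) -> tuple[str, list[str]]:
    """Return ('accelerators' or 'cpu', reasons) using the criteria listed above."""
    reasons = []
    if w.trainings_per_month >= FREQUENT_TRAINING and w.dataset_gb >= LARGE_DATASET_GB:
        reasons.append("frequent training on larger datasets")
    if w.p95_latency_ms_target <= LOW_LATENCY_MS:
        reasons.append("low-latency inference under real user load")
    if w.ai_apps_served >= SHARED_PLATFORM_APPS:
        reasons.append("multiple AI applications across teams")
    return ("accelerators" if reasons else "cpu", reasons)
```

An occasional batch-scoring job, such as `Workload(1, 5.0, 2000.0, 1)`, comes back as `("cpu", [])`, which matches the point that specialized hardware is often wasteful for that kind of work.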

Networking and Connectivity Frameworks

Networking is often ignored until it breaks, but for production AI infrastructure, it’s foundational.

- Low-latency networks matter for distributed training, real-time inference, and high-throughput pipelines.

- Multi-node workloads depend on consistent bandwidth, predictable latency, and reliable segmentation.

- Strong networking is essential for large-scale data processing when data and compute reside in different locations (hybrid environments are common in industrial companies).

If the network is unstable, the whole AI stack feels flaky. You’ll see timeouts, job failures, slow training, and unpredictable inference latency.
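A quick back-of-the-envelope calculation shows why bandwidth matters when data and compute sit in different places. This sketch converts dataset size in gigabytes to gigabits and divides by usable bandwidth. The `efficiency` factor (0.7 by default) is an assumption standing in for protocol overhead and contention, not a measured value.

```python
def transfer_hours(dataset_gb: float, link_gbps: float, efficiency: float = 0.7) -> float:
    """Estimate hours to move a dataset over a network link.

    dataset_gb: size in gigabytes; link_gbps: nominal bandwidth in gigabits per second.
    efficiency: assumed fraction of nominal bandwidth actually achieved (illustrative).
    """
    if link_gbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("link_gbps must be > 0 and efficiency must be in (0, 1]")
    gigabits = dataset_gb * 8                      # bytes -> bits
    seconds = gigabits / (link_gbps * efficiency)  # time = size / usable bandwidth
    return seconds / 3600

for gbps in (1, 10):
    print(f"2 TB over {gbps} Gbps: {transfer_hours(2000, gbps):.1f} h")
```

Under these assumptions, a 2 TB dataset takes roughly 6.3 hours over a 1 Gbps link and roughly 0.6 hours over 10 Gbps. That difference decides whether a nightly retraining job in a hybrid environment is practical.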

Data Handling and Storage Solutions

AI is only as strong as its data foundation. This component covers storage systems and the practical patterns that keep data usable.

- Object storage is a common backbone for datasets, artifacts, logs, and model outputs. It scales well and is cost-effective for large volumes.

- Distributed file systems can be useful when workloads need POSIX-style access patterns or tight coupling with compute clusters.

- Traditional relational systems still matter for transactional systems and operational data, but they often aren’t the best place to store training datasets and model artifacts long-term.

The goal is consistent, governed access to data with scalable storage solutions that support growth. In production, you also need data integrity: confidence that the data is complete and hasn’t been altered unexpectedly. Without data integrity, model outputs become hard to trust, and the business stops using them.
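One common way to get that data-integrity confidence is a checksum manifest. You record a hash of every file in a dataset snapshot, then verify it before training or scoring. The minimal sketch below uses SHA-256 from Python’s standard library. The file layout is an assumption, and object stores often expose their own checksums that can serve the same purpose.

```python
from __future__ import annotations
import hashlib
from pathlib import Path

def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large datasets never need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def build_manifest(files: list[Path]) -> dict[str, str]:
    """Record a checksum for every file in a dataset snapshot."""
    return {str(p): sha256_of(p) for p in files}

def verify_manifest(manifest: dict[str, str]) -> list[str]:
    """Return files that are missing or whose contents changed since the manifest was built."""
    problems = []
    for name, expected in manifest.items():
        path = Path(name)
        if not path.exists():
            problems.append(f"missing: {name}")
        elif sha256_of(path) != expected:
            problems.append(f"altered: {name}")
    return problems
```

Storing the manifest next to the model artifact also ties each model to the exact data it was trained on.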

Data Processing Frameworks

This is the layer that turns raw, messy reality into model-ready inputs.

- Data processing frameworks support transformation at scale: parsing, filtering, joining, aggregation, feature generation, and embedding creation.

- Your data pipelines should handle the full flow: data ingestion → cleaning → transformation → feature prep.

- Strong data processing includes validation and monitoring, not just transformations.

Many teams break here because pipelines grow organically. Manual exports creep in, and definitions drift. In the end, models are trained on data that doesn’t match what production sees.

Keep orchestration patterns clear and tool-agnostic. What matters is repeatability, observability, and consistent data handling for multiple teams and models.
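The ingestion → cleaning → transformation → feature prep flow, with validation built in, can be sketched in plain Python. The field names (`order_id`, `customer`, `qty`) and the 5% reject threshold are hypothetical. The design point is that the pipeline fails loudly when too many rows are rejected instead of quietly training on a shrunken dataset.

```python
from __future__ import annotations
from typing import Iterable

REQUIRED = ("order_id", "customer", "qty")  # hypothetical required fields

def clean(rows: Iterable[dict]) -> list[dict]:
    """Standardize string fields and drop exact duplicates."""
    seen, out = set(), []
    for row in rows:
        norm = {k: v.strip() if isinstance(v, str) else v for k, v in row.items()}
        key = tuple(sorted(norm.items()))
        if key not in seen:
            seen.add(key)
            out.append(norm)
    return out

def validate(rows: list[dict]) -> tuple[list[dict], list[tuple[dict, str]]]:
    """Split rows into valid and rejected, recording why each reject failed."""
    valid, rejected = [], []
    for row in rows:
        missing = [f for f in REQUIRED if row.get(f) in (None, "")]
        if missing:
            rejected.append((row, f"missing {missing}"))
        elif not isinstance(row["qty"], int) or row["qty"] <= 0:
            rejected.append((row, "qty must be a positive integer"))
        else:
            valid.append(row)
    return valid, rejected

def transform(rows: list[dict]) -> dict[str, int]:
    """Feature prep: total quantity per customer."""
    totals: dict[str, int] = {}
    for row in rows:
        totals[row["customer"]] = totals.get(row["customer"], 0) + row["qty"]
    return totals

def run_pipeline(raw: Iterable[dict], max_reject_rate: float = 0.05):
    rows = clean(raw)
    valid, rejected = validate(rows)
    rate = len(rejected) / len(rows) if rows else 0.0
    if rate > max_reject_rate:
        # Fail visibly rather than let bad data flow silently into training.
        raise RuntimeError(f"reject rate {rate:.0%} exceeds {max_reject_rate:.0%}")
    return transform(valid), rejected
```

In a real deployment, each function would become a task in whatever orchestrator your team uses. The stages and their checks stay the same.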

Security and Compliance

If your AI systems touch customer, employee, financial, or operational data, security is not optional. A secure AI infrastructure is the difference between a pilot and something you can roll out.

Key practices include:

- Encryption at rest and in transit

- Network boundaries and segmentation

- Strong access control using role-based permissions

- Audit trails and governance workflows

- Clear policies for data retention and data protection

Security also affects adoption. If executives can’t trust the controls, AI initiatives stall. A practical security posture helps AI move forward without creating risk.
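Role-based access control and audit trails work together. Every request is checked against the permissions granted to a role, and every decision, allowed or denied, is recorded. The roles and permission strings below are hypothetical, and the in-memory `AUDIT_LOG` stands in for the append-only storage a production system would use.

```python
from __future__ import annotations
from datetime import datetime, timezone

# Hypothetical roles and permissions, for illustration only.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "analyst": {"dataset:read", "model:invoke"},
    "ml_engineer": {"dataset:read", "model:invoke", "model:deploy"},
    "admin": {"dataset:read", "dataset:write", "model:invoke", "model:deploy"},
}

AUDIT_LOG: list[dict] = []  # stand-in for append-only audit storage

def authorize(user: str, role: str, action: str, resource: str) -> bool:
    """Allow the action only if the role grants it, and record every decision."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())  # unknown roles get nothing
    AUDIT_LOG.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "action": action,
        "resource": resource,
        "allowed": allowed,
    })
    return allowed
```

Defaulting unknown roles to an empty permission set keeps the check deny-by-default, and logging denials as well as grants gives auditors the full picture.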

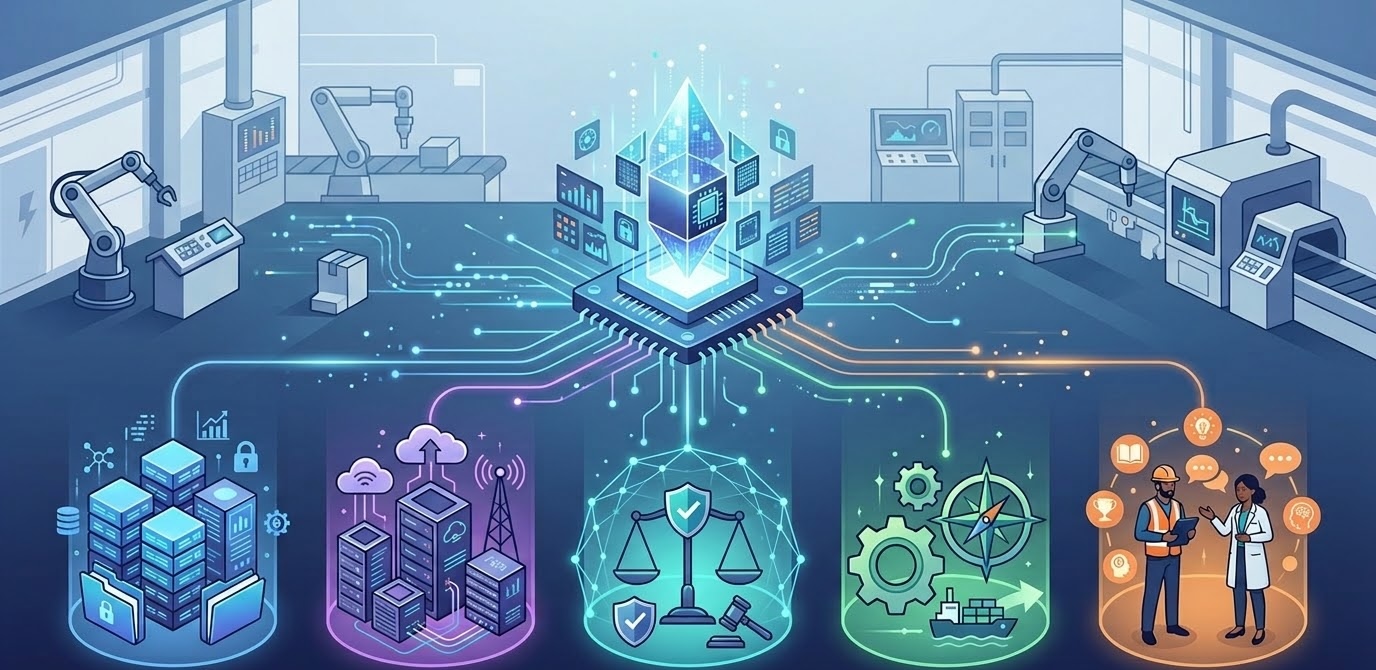

Machine Learning Operations (MLOps)

MLOps is where AI infrastructure work becomes sustainable. It’s the discipline that keeps models reliable after they go live.

Core capabilities include:

- CI/CD for machine learning workloads, so changes are tested and controlled

- Model registries and versioning so you can reproduce and roll back

- Monitoring for drift, latency, failures, and data anomalies

- Retraining workflows that refresh models on updated data

- Model deployment patterns for batch scoring, real-time APIs, or embedded applications

Without MLOps, teams get stuck manually babysitting models. With MLOps, AI becomes an operational capability you can rely on.
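To make “reproduce and roll back” concrete, here is a minimal in-memory model registry. It is a sketch only. Dedicated tools such as the MLflow Model Registry provide this with persistence and access control, and the artifact URIs and dataset hashes used with it here are hypothetical.

```python
from __future__ import annotations
from dataclasses import dataclass, field

@dataclass
class ModelVersion:
    version: int
    artifact_uri: str          # where the trained artifact is stored
    training_data_hash: str    # ties the model to an exact dataset snapshot
    metrics: dict[str, float]

@dataclass
class ModelRegistry:
    versions: list[ModelVersion] = field(default_factory=list)
    deployed: list[int] = field(default_factory=list)  # deployment history, latest last

    def register(self, artifact_uri: str, training_data_hash: str,
                 metrics: dict[str, float]) -> ModelVersion:
        mv = ModelVersion(len(self.versions) + 1, artifact_uri, training_data_hash, metrics)
        self.versions.append(mv)
        return mv

    def deploy(self, version: int) -> None:
        if not 1 <= version <= len(self.versions):
            raise ValueError(f"unknown version {version}")
        self.deployed.append(version)

    def current(self) -> ModelVersion | None:
        return self.versions[self.deployed[-1] - 1] if self.deployed else None

    def rollback(self) -> ModelVersion:
        """Revert to the previously deployed version."""
        if len(self.deployed) < 2:
            raise RuntimeError("no earlier deployment to roll back to")
        self.deployed.pop()
        return self.current()
```

Keeping a deployment history, not just a “current” pointer, is what makes rollback a one-step operation instead of an emergency rebuild.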

How does AI infrastructure work?

A good way to understand AI infrastructure is to walk through the lifecycle as a pipeline. This is where you see how data, models, and operations connect.

Data Flow Pipeline

Most production workflows follow this sequence:

- Data ingestion from ERPs, CRMs, portals, sensors, spreadsheets, and third parties

- Data handling: standardize, validate, deduplicate, and apply governance rules

- Data processing: transform into features, aggregates, embeddings, or training-ready tables

- Model training: train models on historical data, evaluate performance, store artifacts

But pipelines break, and they tend to break in familiar ways:

- Manual exports and “one-off” cleanup steps: They become part of the process, and suddenly a critical workflow depends on actions that aren’t automated or documented.

- Inconsistent definitions across departments: When teams don’t agree on what a key field means, models trained on one version of the data behave unpredictably when fed another.

- Missing data lineage and unclear ownership: No one knows exactly where the data came from, how it was transformed, or who is responsible when something looks wrong.

- Quiet failures that only show up when model performance drops: Data can arrive late, incomplete, or subtly changed, and the system keeps running. The only signal is a gradual drop in model performance or decisions that no longer align with reality.

If your AI infrastructure doesn’t make data flows repeatable, your models won’t be repeatable either.
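Quiet failures are easier to catch when every batch is checked for freshness and completeness before it reaches training or scoring. In this sketch, `expected_rows` might come from a trailing average of recent batches. That source, along with the six-hour and 90% defaults, is an illustrative assumption.

```python
from __future__ import annotations
from datetime import datetime, timedelta, timezone

def check_batch(arrived_at: datetime, row_count: int, expected_rows: int,
                max_age: timedelta = timedelta(hours=6),
                min_fraction: float = 0.9,
                now: datetime | None = None) -> list[str]:
    """Flag late or incomplete batches; an empty list means the batch looks healthy."""
    now = now or datetime.now(timezone.utc)
    issues = []
    if now - arrived_at > max_age:
        issues.append(f"stale: batch is {now - arrived_at} old")
    if expected_rows and row_count < min_fraction * expected_rows:
        issues.append(f"incomplete: {row_count} of ~{expected_rows} rows")
    return issues
```

Routing a non-empty result to an alert turns a gradual, invisible drop in model performance into a specific, actionable message.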

AI Models and Frameworks

Once data is prepared, models come into play.

- AI models are trained artifacts that make predictions, classifications, or generations.

- Machine learning is the broader approach of learning patterns from data rather than hard-coding rules.

- AI algorithms define how learning happens, but operational success depends on reproducibility and control.

Most teams rely on machine learning frameworks to standardize training and serving. Two common anchors are PyTorch and TensorFlow. Their real value is that they enable consistent training loops, repeatable evaluation, portable model artifacts, and integration into deployment and monitoring workflows.
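Repeatable evaluation starts with a holdout set that doesn’t change between runs. Here is a framework-agnostic sketch using a seeded random generator. Inside PyTorch or TensorFlow you would also seed the framework itself, for example with `torch.manual_seed` or `tf.random.set_seed`. The record IDs and seed value are illustrative.

```python
from __future__ import annotations
import random

def train_test_split_ids(ids: list[str], test_fraction: float, seed: int):
    """Deterministically split record IDs so every evaluation uses the same holdout."""
    ordered = sorted(ids)          # remove dependence on input order
    rng = random.Random(seed)      # isolated generator; global random state untouched
    rng.shuffle(ordered)
    n_test = int(len(ordered) * test_fraction)
    return ordered[n_test:], ordered[:n_test]  # (train, test)
```

Because the IDs are sorted before shuffling, the split stays the same even if an upstream system starts returning records in a different order.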

What is the Difference Between AI Infrastructure and IT Infrastructure?

The core difference between traditional IT infrastructure and AI infrastructure is what they’re designed to support. Traditional IT systems are built for stability and predictability. They run core applications, handle transactional workloads, and scale in fairly steady, planned ways. AI infrastructure, on the other hand, is built for variability. It has to support experimentation, large data flows, changing models, and workloads that can spike without warning. AI doesn’t replace IT, but it does place new and very different demands on it.

Where traditional IT is mostly CPU-driven and application-centric, AI infrastructure blends CPUs with accelerators and shifts the focus to model training and real-time inference. Data processing moves from simple transactions to batch and streaming workflows. Deployments increasingly involve models and datasets that evolve continuously. This changes how teams think about scalability, automation, and cost control, because training and inference behave very differently from standard application traffic.

Operationally, AI also requires greater ongoing oversight. Models must be evaluated over time and retrained as data changes. They also must be monitored for drift or performance degradation. Observability expands beyond logs and metrics to include data quality and model behavior. That’s why strong AI infrastructure is not just an add-on to existing systems. It’s a broader operational capability that allows AI to run safely and reliably alongside the systems that already keep the business running.

Steps to Build Strong AI Infrastructure

If you’re leading operations, you want a realistic roadmap for lean teams. Building AI infrastructure works best as a staged approach that aligns technical decisions with business outcomes.

Step 1. Define Business Objectives

Start with the workflow, not the model.

- Identify AI applications tied to measurable outcomes: fewer errors, faster quoting, better forecasting, reduced manual routing

- Choose 1–3 priority AI projects worth piloting

- Define success metrics upfront (time saved, accuracy, turnaround time, adoption)

Step 2. Assess Data Readiness

Most AI delays are data delays.

- Run a data management reality check: quality, ownership, access, and gaps

- Establish data lineage and definitions early so teams don’t build on conflicting assumptions

- Identify integration needs across existing systems and operational tools
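A data readiness check doesn’t need heavy tooling to get started. This sketch profiles a sample of records for null rates per field and duplicate keys, two of the most common early blockers. The field names in the usage below (`part_no`, `price`, `uom`) are hypothetical.

```python
from __future__ import annotations
from collections import Counter

def profile(records: list[dict], fields: list[str], key: str) -> dict:
    """Quick readiness check: null rate per field and keys that appear more than once."""
    n = len(records)
    null_rates = {
        f: (sum(1 for r in records if r.get(f) in (None, "")) / n) if n else 0.0
        for f in fields
    }
    key_counts = Counter(r.get(key) for r in records)
    duplicates = sorted(k for k, c in key_counts.items() if c > 1 and k is not None)
    return {"rows": n, "null_rates": null_rates, "duplicate_keys": duplicates}

sample = [
    {"part_no": "A1", "price": 10, "uom": "ea"},
    {"part_no": "A1", "price": None, "uom": "ea"},
    {"part_no": "B2", "price": 5, "uom": ""},
]
print(profile(sample, ["price", "uom"], key="part_no"))
```

Running a check like this on each source system early surfaces ownership questions, such as who fixes missing prices, before they become model problems.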

Step 3. Choose Compute and Storage Architecture

Your architecture should align with your workload, constraints, and security posture.

- Decide where training and inference should live: on-prem, cloud services, or hybrid

- Select compute clusters and storage solutions based on model size, frequency, and latency needs

- Plan for growth with clear scaling patterns and cost controls

This is where choices about storage systems, object storage, and compute sizing become concrete.
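For inference, Little’s law gives a simple starting point for sizing: the number of requests in flight equals the arrival rate times the time each request takes. The sketch below turns that into a replica count. The per-replica concurrency and the 30% headroom are assumptions you would replace with load-test results.

```python
import math

def replicas_needed(peak_rps: float, latency_s: float,
                    concurrency_per_replica: int, headroom: float = 0.3) -> int:
    """Size inference replicas with Little's law: in-flight requests = rate x latency."""
    in_flight = peak_rps * latency_s             # L = lambda * W
    with_headroom = in_flight * (1 + headroom)   # buffer for spikes and slow requests
    return max(1, math.ceil(with_headroom / concurrency_per_replica))
```

For example, 50 requests per second at 0.4 seconds each means about 20 requests in flight, or 26 with headroom. At 8 concurrent requests per replica, that rounds up to 4 replicas.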

Step 4. Build Data Pipelines

Pipelines are where reliability is won.

- Design data pipelines that are repeatable, monitored, and documented

- Automate ingestion and transformation to reduce spreadsheets and manual steps

- Make pipelines resilient with retries, validation, and alerts
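Retries, backoff, and alerts fit in one small wrapper around each pipeline step. This is a minimal sketch. It catches every exception for brevity, but real code should retry only transient errors such as timeouts. The default `alert=print` is a placeholder for whatever notification channel your team uses.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")

def with_retries(task: Callable[[], T], attempts: int = 4, base_delay: float = 0.5,
                 alert: Callable[[str], None] = print) -> T:
    """Run a pipeline step, retrying failures with exponential backoff and jitter.

    After the final failure, send an alert and re-raise so the run fails visibly.
    """
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(1, attempts + 1):
        try:
            return task()
        except Exception as exc:  # narrow to transient error types in real code
            if attempt == attempts:
                alert(f"step failed after {attempts} attempts: {exc!r}")
                raise
            # Delays of 0.5s, 1s, 2s, ... plus jitter so parallel jobs don't retry in lockstep.
            time.sleep(base_delay * 2 ** (attempt - 1) + random.uniform(0, base_delay))
```

Re-raising after the alert matters. A step that gives up silently is exactly the kind of quiet failure that erodes trust in model outputs.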

Step 5. Implement MLOps

This is how you keep AI stable after launch.

- CI/CD for training and inference workflows

- Model registry and deployment strategies with rollback plans

- Monitoring for drift, latency, performance, data quality, and uptime

- Clear processes for retraining models as data changes
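Drift monitoring often starts with the Population Stability Index (PSI), which compares how a feature is distributed in recent data against a baseline. This sketch uses equal-width bins over the baseline’s range. Quantile bins are a common alternative. A frequently cited rule of thumb treats PSI below 0.1 as stable and above 0.25 as significant drift, but those cutoffs are conventions, not guarantees.

```python
import math

def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index: sum of (actual% - expected%) * ln(actual% / expected%)."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values to edge bins
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

Computing PSI per feature on a schedule, and attaching the score to the model version in use, gives retraining decisions a measurable trigger.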

Step 6. Secure and Govern the Environment

Security should be part of the architecture, not a late-stage blocker.

- Encryption, RBAC, audit logging

- Policy-based access to datasets and model endpoints

- Long-term data protection built into operational workflows

When internal teams are lean, a partner can accelerate safe implementation without sacrificing maintainability. A good partner helps you make smart choices early to reduce rework and keep the stack stable long after launch. NorthBuilt can be your partner as you build your AI software.

If your team is evaluating architecture options, this is often the point where an outside perspective pays off. NorthBuilt’s Cloud Migration Consulting, Database Development, and Custom Integrations services can help you choose an approach that fits your current systems and your long-term roadmap.

What are common challenges in building AI infrastructure?

Most teams don’t fail because they lack ambition. They fail because the practical obstacles stack up. Here are the most common challenges, plus mitigation strategies that align with real-world operations.

Talent gaps and complexity

- Challenge: AI infrastructure spans data engineering, ML, security, networking, and operations.

- Mitigation: Start with a narrow use case. Build reusable patterns. Use managed services selectively to reduce complexity while maintaining clear ownership.

Controlling infrastructure costs

- Challenge: Training costs can spike fast. Inference costs can creep over time.

- Mitigation: Right-size aggressively. Separate training vs inference budgets. Use scheduling, quotas, tagging, and usage reviews to keep costs visible and controlled.
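Tagging only pays off if someone rolls the tags up against budgets. Here is a minimal sketch that assumes usage records exported from a billing report. The record shape, hourly rates, and budget figures are all hypothetical. Spend with no budget is treated as over budget, so untagged usage surfaces instead of hiding.

```python
from __future__ import annotations
from collections import defaultdict

# Hypothetical usage records, e.g. exported from a cloud billing report.
usage = [
    {"tag": "training", "gpu_hours": 40, "rate_per_hour": 3.00},
    {"tag": "inference", "gpu_hours": 300, "rate_per_hour": 0.80},
    {"tag": "training", "gpu_hours": 25, "rate_per_hour": 3.00},
]
budgets = {"training": 150.0, "inference": 300.0}  # illustrative monthly budgets

def spend_by_tag(records: list[dict]) -> dict[str, float]:
    """Total cost per tag, keeping training and inference visible separately."""
    totals: dict[str, float] = defaultdict(float)
    for r in records:
        totals[r["tag"]] += r["gpu_hours"] * r["rate_per_hour"]
    return dict(totals)

def over_budget(totals: dict[str, float], budgets: dict[str, float]) -> dict[str, float]:
    """Amount over budget per tag; tags without a budget count fully as overage."""
    return {tag: round(cost - budgets.get(tag, 0.0), 2)
            for tag, cost in totals.items() if cost > budgets.get(tag, 0.0)}

print(over_budget(spend_by_tag(usage), budgets))
```

With these sample numbers, training spend is 195 against a budget of 150, so the report flags training as 45 over while inference stays within its limit.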

Managing machine learning models at scale

- Challenge: Multiple models, versions, and datasets lead to chaos.

- Mitigation: Use registries, standardized deployment, and clear ownership. Set retraining schedules and quality gates before rollout.
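A quality gate is simply an explicit, automated rule that a candidate model must pass before it replaces the one in production. The metric names and thresholds below are hypothetical placeholders for whatever your team agrees on. Returning the list of failures keeps the decision explainable in a CI/CD log.

```python
from __future__ import annotations

def passes_quality_gate(candidate: dict[str, float], production: dict[str, float],
                        min_accuracy: float = 0.85,
                        max_regression: float = 0.01,
                        max_p95_latency_ms: float = 300.0) -> tuple[bool, list[str]]:
    """Decide whether a candidate model may replace the production model."""
    failures = []
    if candidate["accuracy"] < min_accuracy:
        failures.append(f"accuracy {candidate['accuracy']:.3f} below floor {min_accuracy}")
    if candidate["accuracy"] < production["accuracy"] - max_regression:
        failures.append("accuracy regressed versus production")
    if candidate["p95_latency_ms"] > max_p95_latency_ms:
        failures.append(f"p95 latency {candidate['p95_latency_ms']} ms over budget")
    return (not failures, failures)
```

Checking against both an absolute floor and the current production model stops gradual erosion, where each release is “good enough” but slightly worse than the last.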

Vendor lock-in risks

- Challenge: Over-reliance on a single provider or proprietary layer can limit flexibility.

- Mitigation: Favor modular design, open interfaces, and portability planning. Document assumptions and exit paths early.
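Modular design can be as simple as making business logic depend on a narrow interface rather than a vendor SDK. In this sketch, `TextClassifier` is that interface, defined with Python’s `typing.Protocol`. `HostedModelClassifier` wraps a hypothetical injected client, which is a placeholder and not a real provider API, and `KeywordClassifier` is a trivial local fallback.

```python
from typing import Callable, Protocol

class TextClassifier(Protocol):
    """The only contract the business workflow depends on."""
    def classify(self, text: str) -> str: ...

class KeywordClassifier:
    """Local, dependency-free fallback with hypothetical rules."""
    def classify(self, text: str) -> str:
        return "invoice" if "invoice" in text.lower() else "other"

class HostedModelClassifier:
    """Adapter for an external provider; the injected client is a placeholder."""
    def __init__(self, client: Callable[[str], str]):
        self._client = client  # hypothetical: wrap the provider's real SDK call here

    def classify(self, text: str) -> str:
        return self._client(text)

def route_document(text: str, classifier: TextClassifier) -> str:
    """Business logic sees only the interface, never the vendor."""
    label = classifier.classify(text)
    return {"invoice": "accounts-payable"}.get(label, "general-inbox")
```

Switching providers then means writing one new adapter, not touching every workflow that routes documents.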

Maintaining system performance

- Challenge: As AI usage grows, latency and throughput issues surface.

- Mitigation: Performance testing, caching, capacity planning, and strong observability across data, model, and application layers.

Scaling AI applications

- Challenge: A model that works for one team can fail under company-wide load.

- Mitigation: Stage rollouts, define SLOs/SLAs, load test early, monitor continuously.
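An SLO becomes actionable when you check it continuously against real measurements. This sketch computes the 95th-percentile latency with `statistics.quantiles` and an error rate for a window of requests. The 500 ms and 1% targets are illustrative, not recommendations.

```python
from __future__ import annotations
from statistics import quantiles

def check_slo(latencies_ms: list[float], errors: int, total: int,
              p95_target_ms: float = 500.0, max_error_rate: float = 0.01) -> dict:
    """Compare a window of request measurements to SLO targets."""
    p95 = quantiles(latencies_ms, n=100)[94]  # 95th percentile cut point
    error_rate = errors / total if total else 0.0
    return {
        "p95_ms": p95,
        "error_rate": error_rate,
        "ok": p95 <= p95_target_ms and error_rate <= max_error_rate,
    }
```

Running the same check during load tests and in production means a staged rollout can be paused on the same numbers the team agreed to up front.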

Integrating with existing systems

- Challenge: Legacy apps and inconsistent data definitions create brittle integrations.

- Mitigation: Build an integration layer with APIs or events. Modernize incrementally. Treat integration and data contracts as first-class work.
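Treating data contracts as first-class work means writing them down and checking every payload at the integration boundary. The contract below, for hypothetical ERP order events, lists field names and types. The checker reports every violation at once so upstream teams can fix them in one pass.

```python
from __future__ import annotations

# Hypothetical contract for order events published by an ERP integration.
ORDER_CONTRACT_V1 = {
    "version": 1,
    "fields": {"order_id": str, "customer_id": str, "qty": int, "unit_price": float},
}

def contract_violations(payload: dict, contract: dict) -> list[str]:
    """List every way a payload breaks the contract, rather than stopping at the first."""
    problems = []
    for name, expected_type in contract["fields"].items():
        if name not in payload:
            problems.append(f"missing field: {name}")
        elif not isinstance(payload[name], expected_type):
            problems.append(f"{name}: expected {expected_type.__name__}, "
                            f"got {type(payload[name]).__name__}")
    extras = set(payload) - set(contract["fields"])
    problems += [f"unexpected field: {n}" for n in sorted(extras)]
    return problems
```

Versioning the contract lets a legacy system and a modernized one coexist during an incremental migration, each validated against the version it promises to send.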

This is where ongoing maintenance and support become a competitive advantage. AI is an operational system that needs care.

AI Infrastructure That’s NorthBuilt to Last

AI doesn’t become real value until it runs reliably inside your day-to-day systems. If your team is lean and your software is mission-critical, the right move usually isn’t hiring a giant AI staff. It’s building AI infrastructure that fits your security needs and existing operations tools, then keeping it healthy over time.

NorthBuilt helps independent businesses do exactly that. We support the full path from data pipelines and data processing to integrations, cloud architecture, database work, and ongoing maintenance and support that prevents costly downtime. If you’re evaluating AI or struggling to scale it beyond a pilot, book a call with us today. We’ll help you prioritize the right AI applications, assess readiness, and map the shortest path to a secure, production-ready AI stack that won’t leave you hanging.

What does AI infrastructure include?

AI infrastructure includes compute, networking, data storage, data pipelines, data processing frameworks, security controls, and MLOps capabilities for model deployment and monitoring. It also includes the operational practices that keep AI systems stable: observability, access governance, retraining workflows, and incident response. If you plan to support multiple AI applications, your infrastructure should be designed as a shared platform, not a one-off setup.

How much does AI infrastructure cost?

Cost depends on workload type, model size, and how often you train. Training can be the highest variable cost, especially for large language models or heavy computer vision workloads. Inference costs depend on usage volume and latency requirements. The best cost strategy is to separate training and inference, right-size compute, monitor usage, and build pipelines that reduce repeated processing. Strong AI infrastructure is often cheaper long-term than repeated rebuilds.

Can small and mid-sized businesses build AI infrastructure?

Yes. The goal is not to build an enterprise-scale platform on day one. Start with one or two AI projects tied to clear business value, build a reliable data foundation, and expand the stack incrementally. Many mid-sized teams succeed by combining internal ownership with targeted partner support for architecture, security, and integration. The key is designing an AI infrastructure that fits your team size and operational reality.

What hardware is required for AI workloads?

Some workloads run well on CPUs, especially lightweight predictive models or batch scoring. Deep learning, real-time inference, and many generative AI use cases often benefit from accelerators like GPUs and sometimes TPUs. Your compute choice should follow workload needs, not trends. A common approach is CPU for orchestration and preprocessing, GPU for training and serving when needed, and scalable storage that keeps data close to compute.

What is generative AI infrastructure?

Generative AI infrastructure is a subset of AI infrastructure designed to support generative models, often including large language models. It typically requires fast storage for embeddings and retrieval, robust serving for low-latency responses, strong access controls, and monitoring to manage cost and output quality. Many production teams also use retrieval patterns to ground responses in internal data, which increases the importance of clean pipelines and governed data access.
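At its core, the retrieval pattern ranks stored document embeddings by similarity to a query embedding and passes the top matches to the model as context. This sketch uses cosine similarity over hand-made three-dimensional vectors. Production systems use an embedding model and a vector index, often with approximate nearest-neighbor search, and the document IDs here are hypothetical.

```python
from __future__ import annotations
import math

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def top_k(query_vec: list[float], index: dict[str, list[float]], k: int = 2) -> list[str]:
    """Return the k document IDs whose embeddings are most similar to the query."""
    scored = sorted(index.items(), key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [doc_id for doc_id, _ in scored[:k]]

# Toy embeddings for illustration only.
index = {
    "warranty-policy": [0.9, 0.1, 0.0],
    "shipping-rates": [0.1, 0.9, 0.0],
    "safety-manual": [0.0, 0.2, 0.9],
}
print(top_k([1.0, 0.0, 0.0], index))
```

The quality of what gets retrieved depends entirely on what was indexed, which is why clean pipelines and governed access matter even more for generative AI.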

How long does it take to deploy AI infrastructure?

It depends on your starting point. If data pipelines are weak and systems are fragmented, the first phase is usually data readiness and integration. A focused pilot can move quickly when the use case is narrow and data access is clean. Production readiness takes longer because it includes security, monitoring, deployment controls, and operational processes. The best approach is staged: pilot, prove value, then scale the platform as adoption grows.

Do you need the cloud for AI infrastructure?

No, but the cloud can be helpful for elasticity, managed services, and fast iteration. On-prem can make sense for sensitive data, strict compliance needs, or existing investment in hardware. Hybrid patterns are common in industrial environments. The right answer depends on data sensitivity, latency requirements, and operational support capacity. A good AI infrastructure plan is clear about where training and inference live, and why.

How do you maintain AI systems long-term?

You maintain AI systems the way you maintain other critical systems: monitoring, incident response, change control, and continuous improvement. For AI, that also includes data quality checks, drift monitoring, retraining schedules, and version control for datasets and models. Long-term success depends on MLOps discipline and clear ownership. If you don’t have internal bandwidth, this is where a reliable partner and a support plan make a measurable difference.

Chris Morbitzer

Chris Morbitzer is CEO and co-founder of NorthBuilt, a Minnesota-based software development partner that helps independent manufacturers, agricultural companies, and industrial services firms across the Midwest implement AI and build practical technology solutions.