Data Cleaning: How to Turn Messy Data Into Reliable Insights for Manufacturing Operations

Basic Summary

Data cleaning is the process of identifying and fixing errors, inconsistencies, and gaps in your data so you can trust it. For manufacturers and industrial service companies, clean data is essential for accurate reporting, stable systems, and confident decision-making.


Manufacturing and industrial service companies rely on data every day. Production numbers, inventory counts, service tickets, pricing, customer records, and financial reports all flow through software systems. When that data is accurate, leaders can make informed decisions. When it is inconsistent, it creates confusion and can sometimes lead to costly mistakes.

Data cleaning, sometimes called data cleansing or data scrubbing, is the foundation of reliable systems. It is the disciplined process of identifying and correcting errors in raw data so it can be used for accurate analysis and operational reporting. For companies that depend on ERP systems and multiple data sets across departments, data cleaning is not optional. It is a core part of maintaining data quality and protecting the value of your technology investment.

What Is Data Cleaning?

Data cleaning is the process of reviewing raw data and correcting issues that reduce its accuracy and usefulness. These issues often include missing values, duplicate entries, structural errors, invalid data, inconsistent formats, and unwanted observations.

In practical terms, data cleaning might mean removing duplicate rows from a customer table, correcting typographical errors in part numbers, standardizing date formats across multiple columns, or handling null values that arise from incomplete data collection. It may also involve parsing large text fields into structured components, such as separating a full name into first and last names or breaking an address into defined fields.

The goal is simple: Transform messy data into clean data that supports accurate analysis and high-quality machine learning models. Without this step, even advanced analytics tools can produce false conclusions.

Why Data Quality Matters in Manufacturing

Manufacturers and industrial service businesses often operate with multiple data sources. You may have one system for quoting and pricing and another for financial reporting. Over time, data flows between these systems through imports and exports, as well as integrations. Each transfer introduces the risk of inconsistent or structural data errors.

Common data quality issues in this environment include duplicate data from repeated imports and incomplete data from manual entry. When these problems accumulate, leaders start seeing conflicting reports. Operations teams lose confidence in dashboards. Finance teams spend hours reconciling numbers.

Poor data quality leads to poor decisions. If production counts deviate significantly from reality due to duplicate entries or missing data, managers may adjust staffing or purchasing incorrectly. If inventory data contains unwanted outliers or extreme values that were never validated, replenishment planning suffers. In regulated environments, inaccurate records can create compliance risks.

High-quality data supports reliable data analysis and confident planning. Clean data is about protecting margins and maintaining trust across multiple departments.

Common Data Quality Issues

Most organizations face a predictable set of challenges when dealing with messy data.

Missing values and missing data are among the most common problems. Fields may contain null values because of incomplete data collection or manual entry errors. Deciding how to handle missing data requires context. Sometimes it makes sense to drop records with missing values. In other cases, statistical methods such as imputation are appropriate.
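The choice between dropping and imputing can be sketched in a few lines of Python. This is a minimal illustration, not a recommended policy: the `tickets` records, the field names, and the decision to use the median are all assumptions made for the example.

```python
from statistics import median

# Hypothetical service-ticket records; None marks a missing value.
tickets = [
    {"ticket_id": "T-100", "hours": 2.5},
    {"ticket_id": "T-101", "hours": None},
    {"ticket_id": "T-102", "hours": 4.0},
    {"ticket_id": None, "hours": 1.0},
]

def drop_missing(rows, required):
    """Keep only rows where every required field is present."""
    return [r for r in rows if all(r.get(f) is not None for f in required)]

def impute_median(rows, field):
    """Fill missing numeric values in `field` with the median of the known values."""
    known = [r[field] for r in rows if r[field] is not None]
    fill = median(known)
    return [{**r, field: fill if r[field] is None else r[field]} for r in rows]

# A ticket without an ID cannot be traced, so it is dropped.
# A missing hours value is estimated from the remaining tickets instead.
cleaned = impute_median(drop_missing(tickets, ["ticket_id"]), "hours")
```

Here the record with no ticket ID is removed, while the ticket with no hours is kept and filled. Which fields are safe to estimate is a business decision, not a technical one.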

Duplicate data and duplicate rows are another frequent issue. Multiple entries for the same customer, order, or part number can skew analysis and inflate counts. Removing duplicate entries is a basic step in any data cleaning process.
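As a rough sketch of duplicate removal, the example below keeps the first record for each customer name after normalizing case and whitespace. The `customers` list and the choice of `name` as the matching key are hypothetical. Real systems often need a stronger key, such as a customer ID.

```python
def normalize_key(value):
    """Collapse whitespace and ignore case so trivial differences do not hide duplicates."""
    return " ".join(value.split()).upper()

def remove_duplicates(rows, key_field):
    """Keep the first row seen for each normalized key."""
    seen = set()
    unique = []
    for row in rows:
        key = normalize_key(row[key_field])
        if key not in seen:
            seen.add(key)
            unique.append(row)
    return unique

# Hypothetical customer table with one near-identical repeat.
customers = [
    {"name": "Acme Tooling", "city": "Duluth"},
    {"name": "acme  tooling ", "city": "Duluth"},
    {"name": "Birch Hydraulics", "city": "Fargo"},
]
deduped = remove_duplicates(customers, "name")
```

Keeping the first occurrence is only one possible rule. Some teams prefer to keep the most recently updated record or to merge fields from both.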

Inconsistent data often appears in different formats. A date format may vary across systems. Phone numbers might be stored with or without country codes. Categorical data may contain slightly different spellings of the same value, creating multiple unique values where only one should exist.
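One way to handle mixed formats is to try a short list of known patterns and map spelling variants to a single canonical value. The sketch below assumes US-style month/day ordering and uses a made-up list of status spellings. Both would need to match what your systems actually produce.

```python
from datetime import datetime

# Date formats assumed to occur in the source systems; extend as needed.
KNOWN_DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%Y%m%d")

def to_iso_date(text):
    """Return the date as YYYY-MM-DD, or None if no known format matches."""
    for fmt in KNOWN_DATE_FORMATS:
        try:
            return datetime.strptime(text.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None

# Hypothetical spelling variants mapped to one canonical category.
STATUS_ALIASES = {"open": "Open", "opened": "Open", "closed": "Closed", "close": "Closed"}

def canonical_status(text):
    """Return the canonical status, or None for an unrecognized spelling."""
    return STATUS_ALIASES.get(text.strip().lower())

normalized = [to_iso_date(d) for d in ["2024-03-07", "03/07/2024", "20240307", "next week"]]
```

Returning `None` for anything unrecognized, rather than guessing, leaves a visible gap that someone can review.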

Structural errors include syntax errors or improperly parsed information. For example, a single text field that combines city, state, and ZIP code limits analysis and reporting. Parsing that field into a standard format improves data accuracy and reporting flexibility.
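Parsing a combined location field might look like the following sketch. It assumes US-style values such as "City, ST 12345" and returns nothing when the text does not fit, so unusual entries can be routed to manual review. The pattern and function name are illustrative only.

```python
import re

# Assumes "City, ST 12345" or "City, ST 12345-6789"; other layouts are not matched.
LOCATION_PATTERN = re.compile(
    r"^\s*(?P<city>[^,]+?)\s*,\s*(?P<state>[A-Za-z]{2})\s+(?P<zip>\d{5}(?:-\d{4})?)\s*$"
)

def parse_location(text):
    """Split a combined location string into city, state, and ZIP fields."""
    match = LOCATION_PATTERN.match(text)
    if match is None:
        return None  # leave for manual review rather than guessing
    return {
        "city": match["city"],
        "state": match["state"].upper(),
        "zip": match["zip"],
    }

parsed = parse_location("St. Cloud, mn 56301")
```

Once the pieces are stored separately, reports can group by state or ZIP code without further text handling.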

Outliers and extreme values can also distort analysis. Some outliers represent true anomalies. Others are simple entry errors. Detecting outliers through visual inspection, or by checking how many standard deviations a value sits from the mean, helps teams distinguish between valid data points and unwanted outliers.
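A simple standard-deviation check can be sketched as follows. The daily production counts and the threshold of 2.0 are assumptions for illustration. With small samples a single extreme value inflates the standard deviation, so stricter thresholds may never trigger.

```python
from statistics import mean, stdev

def flag_outliers(values, threshold=2.0):
    """Return values more than `threshold` sample standard deviations from the mean."""
    center = mean(values)
    spread = stdev(values)  # requires at least two values
    if spread == 0:
        return []
    return [v for v in values if abs(v - center) / spread > threshold]

# Hypothetical daily production counts; 5000 looks like an extra zero.
daily_counts = [498, 502, 505, 497, 501, 499, 503, 5000]
flagged = flag_outliers(daily_counts)
```

A flagged value is a prompt for review, not proof of an error. Someone who knows the process still has to decide whether it was a real spike or a typo.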

The Basic Steps in Data Cleaning Processes

While every organization is different, effective data cleaning processes follow a similar structure.

The first step is inspection. This involves reviewing the original data and understanding its structure. Analysts examine multiple data sets, look for missing values, detect outliers, and assess overall data quality. This phase often includes simple checks in Microsoft Excel or more advanced exploration using tools like Python with the pandas library.
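An inspection pass can be as simple as counting blanks, distinct values, and repeated rows before changing anything. The sketch below uses only Python's standard library on a small, made-up inventory export. The column names and data are hypothetical.

```python
import csv
import io
from collections import Counter

# Hypothetical inventory export; in practice this would be read from a file.
RAW_CSV = """part_number,qty,warehouse
PN-1001,40,North
PN-1002,,north
PN-1001,40,North
PN-1003,15,
"""

def profile(rows):
    """Summarize each column: count of blank cells and count of distinct non-blank values."""
    missing = Counter()
    distinct = {}
    for row in rows:
        for column, value in row.items():
            if value is None or value.strip() == "":
                missing[column] += 1
            else:
                distinct.setdefault(column, set()).add(value)
    return {
        column: {"missing": missing[column], "distinct": len(distinct.get(column, ()))}
        for column in rows[0]
    }

rows = list(csv.DictReader(io.StringIO(RAW_CSV)))
report = profile(rows)

# Count rows that exactly repeat an earlier row.
duplicate_rows = sum(n - 1 for n in Counter(tuple(r.items()) for r in rows).values())
```

In this sample the `warehouse` column reports two distinct values only because "North" and "north" differ in case, which is exactly the kind of finding an inspection step is meant to surface.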

Next comes removing duplicate data. Identifying duplicate rows and redundant data ensures that each data point represents a single, accurate record. Many data cleaning tools include built-in features to remove duplicates and highlight duplicate entries.

Handling missing data follows. Teams decide whether to drop records or fill missing values with a specified value. The decision should align with defined business rules and the intended use of the data.

Standardization and data transformation come next. This may include converting fields to a standard format and ensuring consistent units of measure. Data transformation ensures that different formats across multiple columns and systems are unified.
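Unit standardization can be sketched as a lookup of conversion factors. The example converts weights to kilograms. The `shipments` records and the choice to round to three decimals are assumptions for the example.

```python
# Conversion factors to kilograms (the pound and ounce values are exact by definition).
TO_KG = {"kg": 1.0, "g": 0.001, "lb": 0.45359237, "oz": 0.028349523125}

def to_kilograms(value, unit):
    """Convert a weight to kilograms, rejecting units that are not recognized."""
    factor = TO_KG.get(unit.strip().lower())
    if factor is None:
        raise ValueError(f"Unknown unit: {unit!r}")
    return round(value * factor, 3)

# Hypothetical shipment weights recorded in mixed units.
shipments = [
    {"id": "S-1", "weight": 10, "unit": "LB"},
    {"id": "S-2", "weight": 500, "unit": "g"},
    {"id": "S-3", "weight": 2, "unit": "kg"},
]
standardized = [
    {"id": s["id"], "weight_kg": to_kilograms(s["weight"], s["unit"])}
    for s in shipments
]
```

Raising an error on an unknown unit is deliberate in this sketch. Silently passing the number through would mix units in the output.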

Correcting structural errors is another critical step. This includes fixing typographical errors and restructuring fields that do not align with business logic. Parsing and restructuring data improve long-term data management.

Finally, data validation confirms that the cleaned data meets defined business rules. This step ensures that invalid data has been addressed and the final data sets support accurate analysis.
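Business rules can be expressed as small checks that return a list of problems for each record. The rules below (a required part number, a positive whole-number quantity, and a non-negative price) are hypothetical examples, not a standard.

```python
def validate(record):
    """Return a list of rule violations for one order record (empty if the record is valid)."""
    problems = []
    if not record.get("part_number"):
        problems.append("part_number is required")
    qty = record.get("qty")
    if not isinstance(qty, int) or qty <= 0:
        problems.append("qty must be a positive integer")
    price = record.get("unit_price")
    if not isinstance(price, (int, float)) or price < 0:
        problems.append("unit_price must be non-negative")
    return problems

# Hypothetical order records after cleaning.
orders = [
    {"part_number": "PN-1001", "qty": 40, "unit_price": 12.5},
    {"part_number": "", "qty": 0, "unit_price": 3.0},
]
valid = [o for o in orders if not validate(o)]
rejected = [(o, validate(o)) for o in orders if validate(o)]
```

Returning every violation, rather than stopping at the first, gives the person fixing the record a complete picture in one pass.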

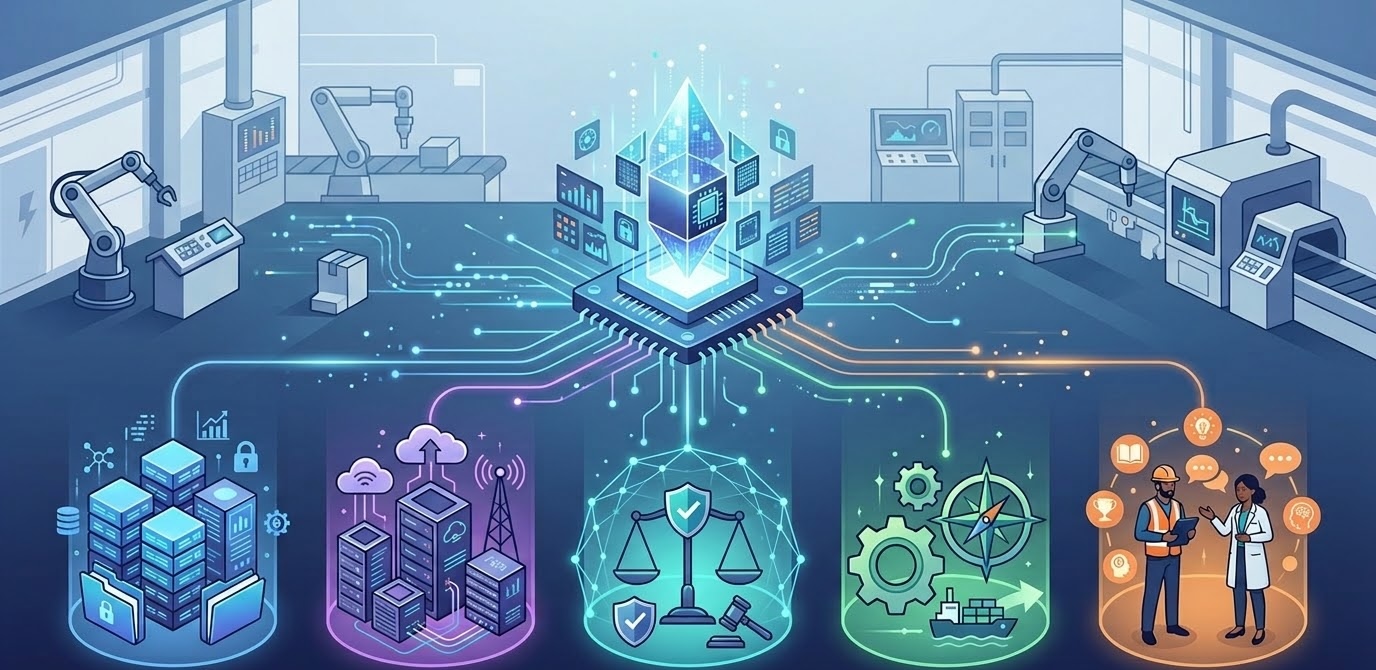

Tools That Support Data Cleaning

Data cleaning tools range from simple to highly specialized. Many manufacturing companies begin with Microsoft Excel for small data sets. Features such as find and replace, remove duplicates, and basic filtering can resolve many common data quality issues.

For more complex needs, programming languages such as Python provide scalable solutions. Using libraries like pandas allows teams to automate repetitive tasks and apply consistent rules across large data sets. With pandas, teams can detect missing values and standardize formats efficiently.
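For a sense of what this looks like in pandas, here is a brief sketch. It assumes pandas is installed and uses a small, made-up parts table. The column names are hypothetical.

```python
import pandas as pd

# Hypothetical parts table with stray whitespace, mixed case, and gaps.
parts = pd.DataFrame({
    "part_number": [" pn-1001", "PN-1002", "PN-1001 ", None],
    "qty": [40, None, 40, 15],
})

# Count missing values in each column before changing anything.
missing_per_column = parts.isna().sum()

# Standardize part numbers: trim whitespace and use upper case.
parts["part_number"] = parts["part_number"].str.strip().str.upper()

# Drop rows that are exact repeats once formats are consistent.
deduped = parts.drop_duplicates()
```

The order matters here. Standardizing first lets `drop_duplicates` catch rows that differed only in spacing or case.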

Specialized data cleansing tools and platforms, including SQL-based solutions and data preparation software, offer additional capabilities for validation and integration. The right tool depends on the size of your data and the internal expertise available.

The Benefits of Data Cleaning

The benefits of data cleaning extend beyond tidy records. Clean data improves data accuracy and strengthens confidence across the organization.

For operational teams, clean data enables accurate performance tracking and better resource planning. For finance, it ensures that reporting reflects reality and reduces time spent reconciling discrepancies. For leadership, it reduces the risk of false conclusions based on inconsistent or incomplete data.

Clean data also improves machine learning models. Machine learning relies on high-quality data. If training data contains invalid data or inconsistent formats, the model will learn from flawed patterns. Investing in data cleansing improves model performance and reduces risk.

Over time, structured data cleaning processes also reduce manual effort. Instead of repeatedly fixing the same issues, organizations build validation rules and automated checks into their systems. This shift from reactive correction to proactive data management supports long-term efficiency.

Ongoing Data Management, Not a One-Time Project

Many organizations treat data cleaning as a one-time cleanup. They run a project to fix messy data, then move on. Over time, new data introduces the same problems again.

Sustainable data quality requires ongoing data management. Defined business rules and regular audits prevent common data quality issues from resurfacing. This approach aligns with how we think about software maintenance more broadly. Systems require ongoing care.

For manufacturers running custom applications and reporting dashboards, data cleaning should be built into the operational rhythm. New data should be validated. External sources should be checked for consistent formats. Imports should follow standard rules. Over time, this discipline reduces risk and improves trust.

Bringing Structure to Your Data Systems

If your team spends too much time reconciling reports or correcting repetitive errors, it may be time to revisit your data cleaning processes. The right structure can improve data quality and reduce friction across multiple departments.

At NorthBuilt, we work with independent manufacturers and industrial service companies to keep custom software systems healthy and reliable. That includes improving workflows so they support clean data from the start. Through a structured discovery process and ongoing support, we help teams move from reactive fixes to proactive data management.

If you want to avoid poor decisions caused by messy data and build a foundation of reliable data for growth, it starts with understanding how your systems handle information today. You can learn more about how we approach long-term support and modernization through our process, or schedule a conversation to discuss your specific challenges.

Clean data is a business asset. When your systems consistently produce high-quality data, your leadership team can move forward with confidence.

Chris Morbitzer

Chris Morbitzer is CEO and co-founder of NorthBuilt, a Minnesota-based software development partner that helps independent manufacturers, agricultural companies, and industrial services firms across the Midwest implement AI and build practical technology solutions.