How to Develop AI Software: Building AI-Powered Solutions That Last

Basic Summary: This guide explains how mid-sized businesses can develop AI software that delivers real operational value. It walks through a practical, end-to-end approach for building AI-powered solutions that integrate with existing systems, reduce manual work, and remain reliable over time.

AI has officially moved past the “nice demo” phase. In manufacturing, agriculture, staffing, and industrial services, AI-powered systems are showing up to automate processes that used to take hours, enabling newfound speed in day-to-day operations.

That’s why more businesses are looking to develop AI software that fits their current environment instead of buying another tool that doesn’t match their workflow. Most companies are aiming for a handful of high-impact improvements that remove friction.

A common misconception right now is that artificial intelligence is only practical for big tech or venture-backed startups. In reality, most successful AI systems are built the same way reliable business software is built: clear requirements, solid software development, clean integrations, and ongoing support.

AI Software Development

AI is transforming the way businesses approach software development by enabling the creation of intelligent systems that can perform tasks traditionally handled by humans. At the heart of this transformation are AI models, which power everything from writing code and understanding natural language to automating routine tasks and recognizing images. The process of AI software development requires a strategic approach to planning, execution, and rigorous testing to ensure the final AI software meets business needs and delivers reliable results.

When AI is applied to the right operational problems, it creates practical advantages:

- Automating routine, repetitive tasks like document classification, data entry, ticket routing, and report drafting

- Improving data accuracy by catching inconsistencies early and reducing human error

- Supporting real-time decision making with alerts, recommendations, or prioritization

- Adding predictive analytics to forecasting, maintenance planning, staffing, or demand signals

- Helping teams enhance productivity without adding headcount

- Keeping solutions user-centric, so the AI software is designed around the people who will actually use it

This is where AI-powered solutions shine: consistently handling high-volume work and assisting people at the right moments.

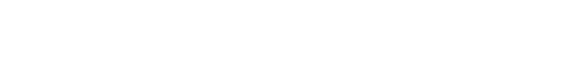

Typical phases in the AI development lifecycle

A practical AI development roadmap follows familiar software development patterns, with additional work around data and models:

- Discovery and requirements

- Data collection and preparation

- Model selection and training

- Integration with existing systems

- Deployment, monitoring, and continuous improvement

But the road doesn’t stop there. After launch, the development lifecycle continues with testing and refinement, because data and processes change and model performance drifts over time.

Roadmap to Develop AI Software

Step 1: Defining the Operational Problem

Teams often begin with a vague goal like “use AI to improve operations.” That sounds reasonable until you consider how many ways it could be applied. Ill-defined parameters lead to scope creep and mismatched expectations. Teams that succeed start with a narrow, specific goal: they focus on one real operational pain point and define it precisely.

Good AI use cases can sound like:

- “Classify incoming service requests and route them to the right queue.”

- “Extract fields from PDF invoices and validate them against purchase orders.”

- “Recommend the next best action for overdue orders based on status and history.”

The common thread is clarity. Each example describes a specific task with a measurable outcome. AI works best when scoped to well-defined actions like summarizing, classifying, extracting, predicting, or detecting anomalies. If the requirement is fuzzy, there’s no reliable way to evaluate success.

The first step is also where teams must consider user experience. The most effective AI in operations is usually subtle: a suggested value, a flagged exception, a ranked list, or a draft response. To make that work, teams must be explicit about:

- Who sees the AI output

- When they see it in their workflow

- What action they can take

- What happens when they disagree with the recommendation

Leaving these decisions vague erodes trust quickly. Involving business analysts early helps prevent that. They translate real, day-to-day work into requirements that engineers can build and maintain, keeping the project grounded in outcomes instead of novelty.
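One way to keep these decisions explicit is to write them down as a small structured record before any model work starts. The sketch below is illustrative only: the `UseCaseSpec` class, its field names, and the example values are hypothetical, not a standard template.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class UseCaseSpec:
    """Hypothetical record of the workflow decisions listed above."""
    task: str             # the narrow task the AI performs
    success_metric: str   # how the outcome is measured
    audience: str         # who sees the AI output
    workflow_moment: str  # when they see it in their workflow
    allowed_action: str   # what action they can take
    on_disagreement: str  # what happens when they disagree


def missing_decisions(spec: UseCaseSpec) -> list[str]:
    """Return the names of any decisions that were left blank."""
    return [f.name for f in fields(spec) if not getattr(spec, f.name).strip()]


spec = UseCaseSpec(
    task="Classify incoming service requests and route them to the right queue",
    success_metric="Share of requests routed correctly without manual re-routing",
    audience="Service desk dispatcher",
    workflow_moment="When a new request is opened",
    allowed_action="Accept or change the suggested queue",
    on_disagreement="",  # left blank on purpose to show the check
)
print(missing_decisions(spec))  # ['on_disagreement']
```

A check like this can run in a requirements review, so a blank answer is caught before it turns into an unclear feature.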

Step 2: Data Collection and Labeling

Once the problem is defined, reality usually shows up in the data.

Most organizations have plenty of data, but it’s rarely clean and organized the way AI models expect. ERP systems, CRMs, purchasing platforms, inventory tools, quality records, production logs, and support tickets all contain useful signals, but they often reflect years of workarounds and manual processes.

In some cases, external data can add value, such as supplier updates or public market information. When it does, it needs to be evaluated carefully for reliability, licensing, and long-term availability.

Data handling is also where risk increases. If your systems contain customer pricing, employee information, or regulated records, those constraints have to be designed into the solution from the start. “Move fast” isn’t a strategy when sensitive data is involved.

Many AI models require labeled examples, which introduces another common challenge. Labels don’t need to be perfect, but they do need to be consistent. Clear labeling rules, sampling strategies, and periodic quality checks matter more than volume alone.
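Label consistency can be measured rather than assumed. A minimal sketch, assuming two people label the same sample of records: Cohen's kappa compares how often they agree with how often they would agree by chance. The function name and the example labels are illustrative.

```python
from collections import Counter


def cohens_kappa(labels_a: list[str], labels_b: list[str]) -> float:
    """Agreement between two labelers, corrected for chance agreement."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("Both labelers must label the same non-empty sample")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement: probability both pick the same label independently.
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both labelers used one identical label throughout
    return (observed - expected) / (1 - expected)


labeler_1 = ["billing", "billing", "repair", "repair"]
labeler_2 = ["billing", "repair", "repair", "repair"]
print(cohens_kappa(labeler_1, labeler_2))  # 0.5
```

A low score on a small shared sample is usually a sign that the labeling rules need tightening before more data is labeled.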

In manufacturing and industrial environments, teams often discover gaps like:

- Important fields buried in free-text notes

- Inconsistent naming conventions across systems

- Missing timestamps or identifiers

- Process steps that were never captured digitally

These gaps don’t mean AI is off the table. But the development process must include data cleanup and process alignment as first-class work, not an afterthought.
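A first data audit does not need special tooling. The sketch below counts missing required fields and finds status values that differ only in spelling. The field names and sample records are hypothetical, and a real audit would cover more fields and more kinds of inconsistency.

```python
from collections import Counter

REQUIRED_FIELDS = ("order_id", "created_at", "status")  # hypothetical field names


def audit_records(records: list[dict]) -> dict:
    """Count missing required fields and spelling variants of the status value."""
    missing = Counter()
    status_variants: dict[str, set[str]] = {}
    for record in records:
        for field in REQUIRED_FIELDS:
            value = record.get(field)
            if value is None or str(value).strip() == "":
                missing[field] += 1
        raw_status = record.get("status")
        if raw_status:
            # Normalize case and separators so variants share one key.
            key = str(raw_status).strip().lower().replace("-", " ").replace("_", " ")
            status_variants.setdefault(key, set()).add(str(raw_status))
    inconsistent = {k: sorted(v) for k, v in status_variants.items() if len(v) > 1}
    return {"missing": dict(missing), "inconsistent_status": inconsistent}


records = [
    {"order_id": "A1", "created_at": "2024-01-05", "status": "On Hold"},
    {"order_id": "A2", "created_at": "", "status": "on-hold"},
    {"order_id": None, "created_at": "2024-01-07", "status": "shipped"},
]
report = audit_records(records)
print(report["missing"])
print(report["inconsistent_status"])
```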

Step 3: Selecting AI Models and Architectures

Model selection is where teams can lose significant time if decisions are driven by hype instead of constraints.

There are generally three paths:

- Pretrained models – Fast to start and work well for many common tasks, especially for natural language use cases

- Fine-tuned models – Improve accuracy and consistency for a specific domain when you have enough high-quality examples

- Custom models – Best reserved for cases with strict constraints or a highly specialized task

Many teams assume more customization automatically means better results. In practice, smaller, well-matched solutions are often easier to deploy, monitor, and maintain.

Generative AI deserves special attention. It’s a strong fit for language-heavy or draft-based tasks like summarizing work orders, drafting customer updates, extracting structure from messy text, or building internal search and Q&A tools. At the same time, generative systems require guardrails because they can produce incorrect or inconsistent outputs. Verification, constraints, and logging are not optional.
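As a rough illustration of verification, constraints, and logging working together, the sketch below accepts a generative model's routing answer only when it is valid JSON that names an allowed queue. The queue names, the `manual_review` fallback, and the expected JSON shape are assumptions made for the example.

```python
import json
import logging

logger = logging.getLogger("ai_guardrails")
ALLOWED_QUEUES = {"billing", "repair", "scheduling"}  # hypothetical queue names


def parse_routing_output(raw_output: str) -> dict:
    """Accept the model's answer only if it is valid JSON naming an allowed queue."""
    fallback = {"queue": "manual_review", "accepted": False}
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError:
        logger.warning("Rejected non-JSON model output: %r", raw_output[:200])
        return fallback
    queue = data.get("queue") if isinstance(data, dict) else None
    if not isinstance(queue, str) or queue not in ALLOWED_QUEUES:
        logger.warning("Rejected unexpected queue value: %r", queue)
        return fallback
    logger.info("Accepted model routing: %s", queue)
    return {"queue": queue, "accepted": True}


print(parse_routing_output('{"queue": "repair"}'))
print(parse_routing_output("Sure! The best queue is repair."))
```

Anything the check rejects goes to a person instead of flowing downstream, and the log keeps a record of how often that happens.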

The “right model” is the one that meets your accuracy, latency, cost, and risk requirements in production. Sometimes a simple classifier outperforms a larger model simply because it’s faster, cheaper, and easier to audit.

Step 4: Prototype Model Training

Before committing to full implementation, teams reduce risk by validating feasibility early.

This usually means running small-scale experiments on a narrow slice of real data with clearly defined metrics. The goal is to confirm that the model is good enough to support the workflow reliably.

This is where disciplined AI development matters. Data scientists and AI developers establish baselines, test assumptions, and surface failure modes early. Success criteria should be explicit, such as accuracy thresholds, acceptance rates, or required levels of human review.

If a model can’t meet baseline targets without excessive complexity, that’s a signal to pause. Sometimes the right fix is better data capture or a workflow adjustment, not a more complex model.
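A simple way to make "baseline" concrete is to compare the prototype against a rule that always predicts the most common label. The sketch below assumes a classification task. The labels, predictions, and the 0.75 target are made-up example values, not recommendations.

```python
from collections import Counter


def accuracy(predicted: list[str], actual: list[str]) -> float:
    """Share of predictions that match the actual labels."""
    return sum(p == a for p, a in zip(predicted, actual)) / len(actual)


def majority_baseline(train_labels: list[str], size: int) -> list[str]:
    """Always predict the most common training label."""
    most_common_label = Counter(train_labels).most_common(1)[0][0]
    return [most_common_label] * size


def meets_target(model_acc: float, baseline_acc: float, target: float) -> bool:
    """The prototype passes only if it beats the baseline and reaches the agreed target."""
    return model_acc > baseline_acc and model_acc >= target


train_labels = ["repair"] * 6 + ["billing"] * 4
actual = ["repair", "repair", "billing", "repair", "billing"]
model_predictions = ["repair", "repair", "billing", "billing", "billing"]

baseline_acc = accuracy(majority_baseline(train_labels, len(actual)), actual)
model_acc = accuracy(model_predictions, actual)
print(baseline_acc, model_acc, meets_target(model_acc, baseline_acc, target=0.75))
# 0.6 0.8 True
```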

The 30% rule for AI

The “30% rule” is a practical planning concept: assume that roughly 30% of the effort will go to experimentation, iteration, and refinement rather than a straight-line build. In AI work, you’re validating data readiness, testing model behavior, adjusting the workflow, and tightening guardrails. If you don’t plan for that learning loop, timelines and budgets get stressed.
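The arithmetic behind the rule is simple enough to build into an estimate. A minimal sketch, assuming a hypothetical 400-hour budget; the function name and the figure are illustrative only.

```python
def split_effort(total_hours: float, learning_share: float = 0.30) -> dict[str, float]:
    """Split a budget into the learning loop and straight-line build work."""
    if not 0 <= learning_share <= 1:
        raise ValueError("learning_share must be between 0 and 1")
    learning = round(total_hours * learning_share, 1)
    return {
        "learning_loop": learning,
        "straight_line_build": round(total_hours - learning, 1),
    }


plan = split_effort(400)  # 400 hours is an arbitrary example figure
print(plan)  # {'learning_loop': 120.0, 'straight_line_build': 280.0}
```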

Step 5: Code Generation and Integration

This is where AI turns into real software. Modern AI tools can speed up development through code generation and code completion, especially for common patterns, integrations, and test scaffolding. Used properly, they help developers focus on higher-value work instead of boilerplate.

The real complexity lies in integration. Models need to work inside existing applications with proper authentication, permissions, retries, logging, error handling, and fallbacks. That’s classic software development applied to AI.

Most teams wrap models behind services or APIs so applications can call them consistently, store results with context, and swap implementations later without major rewrites. Internal code snippets should always be paired with documentation so future engineers can maintain the system confidently.
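The wrapping pattern can be sketched in a few lines. In the example below, `ModelService`, its parameters, and the `flaky_model` stand-in are all hypothetical. The point is only that callers depend on one stable interface while retries, logging, and a fallback live inside it.

```python
import logging
import time
from typing import Callable

logger = logging.getLogger("model_service")


class ModelService:
    """Wraps any prediction callable so callers never depend on a specific model."""

    def __init__(self, predict: Callable[[str], str], fallback: str,
                 max_attempts: int = 3, delay_seconds: float = 0.5):
        self._predict = predict
        self._fallback = fallback
        self._max_attempts = max_attempts
        self._delay_seconds = delay_seconds

    def classify(self, text: str) -> dict:
        """Try the model a limited number of times, then return the fallback."""
        for attempt in range(1, self._max_attempts + 1):
            try:
                label = self._predict(text)
                return {"label": label, "source": "model", "attempts": attempt}
            except Exception as exc:
                logger.warning("Prediction attempt %d failed: %s", attempt, exc)
                if attempt < self._max_attempts:
                    time.sleep(self._delay_seconds)
        return {"label": self._fallback, "source": "fallback",
                "attempts": self._max_attempts}


calls = {"count": 0}


def flaky_model(text: str) -> str:
    """Stand-in for a real model call; fails twice, then answers."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise TimeoutError("model backend busy")
    return "repair"


service = ModelService(flaky_model, fallback="manual_review", delay_seconds=0.0)
result = service.classify("Pump is leaking")
print(result)  # {'label': 'repair', 'source': 'model', 'attempts': 3}
```

Because the application only calls `classify`, the underlying model can be swapped by passing a different callable, without touching the calling code.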

AI-assisted coding still requires review. Enforcing standards protects code quality and reduces security vulnerabilities, which becomes more important as AI-driven logic moves closer to core operations.

Step 6: Develop Non-AI Software Components

Despite the focus on AI, most of the work is still traditional product development.

AI features depend on solid foundations: portals, dashboards, backend services, databases, integrations, and usable interfaces. Web development, workflow orchestration, and system integration often consume more effort than the model itself.

Programming language choices typically follow existing systems. Python is common for data-heavy services, while JavaScript or C#/.NET often power user-facing and enterprise layers. In some environments, supporting multiple human languages is also necessary for customer or partner-facing tools, and planning for that early prevents costly rework.

Step 7: Deploy AI-Powered Solutions

Successful teams use pilot deployments and staged rollouts: one team, one workflow, clear rollback options, and measurable before-and-after comparisons. This limits risk and builds confidence.
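A staged rollout can be controlled with a small feature gate. The sketch below assumes one named pilot team plus an optional percentage of other users, bucketed by a stable hash so each user gets the same answer every time. The team name and function are hypothetical, and hash bucketing is one common technique rather than something this guide prescribes.

```python
import hashlib

PILOT_TEAMS = {"dispatch-north"}  # hypothetical team identifier


def use_ai_feature(team: str, user_id: str, rollout_percent: int = 0) -> bool:
    """Pilot teams always get the feature; everyone else joins by stable percentage."""
    if team in PILOT_TEAMS:
        return True
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 100  # stable bucket from 0 to 99 per user
    return bucket < rollout_percent


print(use_ai_feature("dispatch-north", "user-17"))                   # True
print(use_ai_feature("dispatch-south", "user-42", rollout_percent=0))  # False
```

Rolling back is then a configuration change: set `rollout_percent` to 0 and remove the pilot team from the set.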

Production introduces live data, real users, and edge cases that didn’t appear in testing. Clear solution architecture helps define system boundaries, data flow, and failure handling so AI enhances operations instead of destabilizing them.

Ongoing measurement matters. Tracking accuracy, response times, override rates, and error patterns is how teams prevent AI-powered features from quietly degrading over time.
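These measurements can be computed from a simple log of what the AI suggested and what the user finally submitted. The sketch below is one possible shape: the `PredictionEvent` record and its fields are hypothetical, and the override rate is used as a practical proxy for accuracy.

```python
import math
from dataclasses import dataclass


@dataclass
class PredictionEvent:
    """Hypothetical log record for one AI suggestion shown to a user."""
    suggested: str
    final: str            # what the user actually submitted
    latency_ms: float
    errored: bool = False


def summarize(events: list[PredictionEvent]) -> dict[str, float]:
    """Compute override rate, error rate, and 95th-percentile latency."""
    if not events:
        raise ValueError("No events to summarize")
    completed = [e for e in events if not e.errored]
    overrides = sum(e.suggested != e.final for e in completed)
    latencies = sorted(e.latency_ms for e in events)
    p95_index = max(0, math.ceil(0.95 * len(latencies)) - 1)  # nearest-rank method
    return {
        "override_rate": overrides / len(completed) if completed else 0.0,
        "error_rate": (len(events) - len(completed)) / len(events),
        "p95_latency_ms": latencies[p95_index],
    }


events = [
    PredictionEvent("repair", "repair", 120),
    PredictionEvent("billing", "repair", 180),
    PredictionEvent("repair", "repair", 150),
    PredictionEvent("", "billing", 900, errored=True),
]
summary = summarize(events)
print(summary)
```

Tracked week over week, a rising override rate is often the earliest visible sign that a feature is degrading.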

Step 8: Continuous Improvement and Maintenance

Long-term value comes after launch. Data changes, processes evolve, and vendors update formats. Models need retraining, prompts need refinement, and performance needs monitoring. Even strong systems degrade without attention.

AI outputs should be monitored like any other production output, with clear visibility into quality, cost, and behavior. Testing, including test case generation, helps identify bugs early before they disrupt operations.

This is why ongoing support matters more than launch day. Without maintenance, AI features become fragile and outdated. With it, they become a durable part of how the business runs.

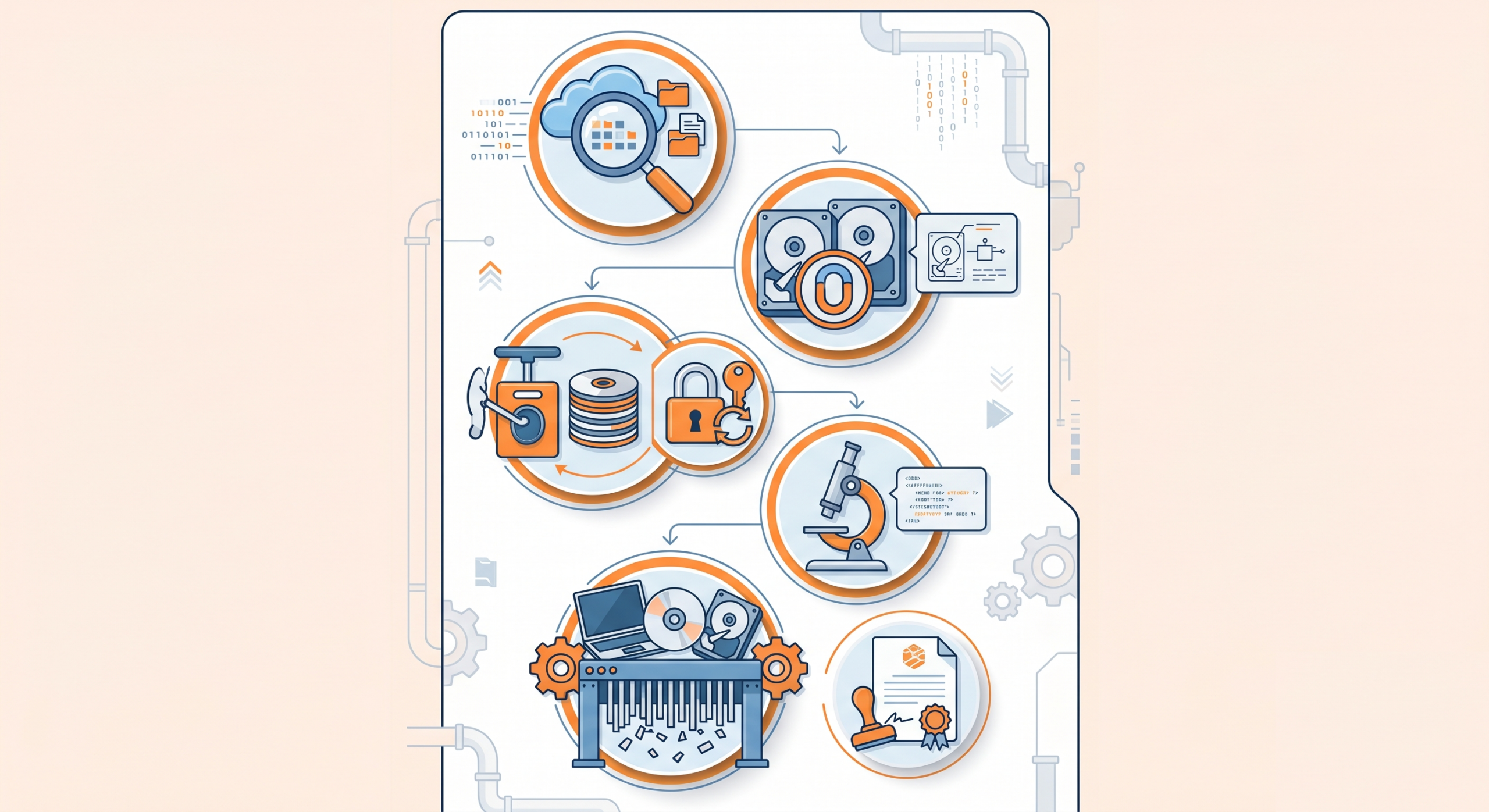

Choosing the Right AI Strategy

Not all AI is the same, and not every problem needs the same kind of solution. The first step is matching the type of work you want to improve with the right AI approach.

For example, language-heavy tasks like classifying emails, extracting information from documents, summarizing notes, or searching internal knowledge bases typically rely on natural language processing. Visual tasks, such as inspecting images, scanning documents, or identifying defects or parts, require image recognition models. Forecasting and planning problems like demand forecasting, preventative maintenance, or staffing predictions use predictive analytics.

Each of these approaches relies on different AI models, data inputs, and computing requirements. Some are lightweight and can run efficiently on standard infrastructure. Others require more specialized deployment and monitoring. The key is understanding what kind of problem you’re solving before deciding how advanced the solution needs to be.

How to Evaluate and Select AI Models

Choosing the right model is less about technical prestige and more about operational fit. A model that looks impressive in a demo can fall apart in production if it doesn’t align with business realities.

When evaluating options, teams should consider:

- How much error the business can tolerate and where mistakes are acceptable or unacceptable

- Whether results need to be explainable or auditable

- How fast responses need to be in real workflows

- What the long-term cost per request looks like

- Whether the solution can be maintained without constant specialist involvement

The best model is the one that delivers consistent, predictable performance in your actual environment.
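The criteria above can be applied as a plain filter before any deeper comparison. In the sketch below, the `Candidate` fields, the thresholds, and every number are made-up placeholders for illustration, not benchmarks of real models.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    accuracy: float               # measured on your own validation data
    p95_latency_ms: float
    cost_per_1k_requests: float
    auditable: bool


def shortlist(candidates: list[Candidate], min_accuracy: float,
              max_latency_ms: float, max_cost: float,
              require_audit: bool) -> list[Candidate]:
    """Keep candidates that meet every constraint, cheapest first."""
    passing = [
        c for c in candidates
        if c.accuracy >= min_accuracy
        and c.p95_latency_ms <= max_latency_ms
        and c.cost_per_1k_requests <= max_cost
        and (c.auditable or not require_audit)
    ]
    return sorted(passing, key=lambda c: c.cost_per_1k_requests)


# Placeholder figures only.
candidates = [
    Candidate("simple classifier", 0.91, 40, 0.05, True),
    Candidate("fine-tuned model", 0.94, 300, 1.20, False),
    Candidate("large hosted model", 0.95, 1200, 4.00, False),
]
chosen = shortlist(candidates, min_accuracy=0.90, max_latency_ms=500,
                   max_cost=2.00, require_audit=True)
print([c.name for c in chosen])  # ['simple classifier']
```

In this made-up case the most accurate candidate is eliminated by latency and cost, which is the kind of trade-off a demo rarely shows.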

Building a Reliable AI Foundation

AI makes it faster than ever to build systems, but speed shouldn’t come at the expense of longevity. Agile solutions start with a strong foundation.

Data Collection and Preparation

AI systems are only as reliable as the data they’re built on. In most organizations, data exists, but it’s often fragmented, inconsistent, or shaped by years of manual processes.

Strong AI systems start with disciplined data practices. That includes auditing data for missing values and inconsistencies, establishing clear rules for handling sensitive or regulated information, and ensuring data is collected in ways that protect privacy and access controls. When labeling is required, consistency matters more than perfection.

Repeatability is critical. If your team can’t explain where data came from, how it was transformed, and which version was used, results will be difficult to trust or reproduce later.
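One lightweight way to answer "which version was used" is to fingerprint each dataset and store that ID alongside its source and transformation. The sketch below uses only the standard library. The function names, record layout, and example values are hypothetical, and dedicated dataset-versioning tools go much further.

```python
import hashlib
import json


def dataset_fingerprint(rows: list[dict]) -> str:
    """Hash the rows in a canonical form so the same data always yields the same ID."""
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def provenance_record(source: str, transform: str, rows: list[dict]) -> dict:
    """Describe where the data came from, what was done to it, and which version it is."""
    return {
        "source": source,
        "transform": transform,
        "row_count": len(rows),
        "fingerprint": dataset_fingerprint(rows),
    }


rows = [{"order_id": "A1", "status": "shipped"}, {"order_id": "A2", "status": "on hold"}]
record = provenance_record("ERP export", "normalized status values", rows)
print(record)
```

Key order inside a row does not change the fingerprint, but row order and any changed value do, so a mismatch signals that the training data is not the data that was recorded.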

AI Tools, Frameworks, and Tech Stack

From a tooling perspective, most teams rely on mature ecosystems that include core machine learning frameworks, data pipeline tooling, and production monitoring systems. Many are built on open-source foundations, which can offer flexibility and reduce vendor lock-in.

Beyond training models, teams need tools that support experiment tracking, dataset versioning, model registries, and production logging. These make AI systems supportable over time.

Security must also be part of the stack from day one. That means evaluating vendors carefully, designing access controls intentionally, and planning for compliance requirements early. The best stack isn’t the most cutting-edge one. It’s the one your organization can operate reliably without constant firefighting.

Turning AI Into Operational Software

Code Generation and the Developer Workflow

AI has changed how software teams work, but it hasn’t replaced the need for experienced engineers. Used correctly, AI tools help developers move faster by reducing time spent on repetitive coding tasks, providing starter drafts, and suggesting refactors or improvements.

What hasn’t changed is responsibility. Humans still own architecture decisions, security considerations, edge cases, and final code review. AI-generated code needs the same testing, review, and version control discipline as any other contribution.

When teams treat AI as an assistant instead of an autopilot, they gain efficiency without sacrificing quality.

AI Agents and Automation

An AI agent is a controlled mechanism that can take limited steps toward a goal, such as gathering context, proposing an action, or executing within defined boundaries.

In operational software, AI agents can be useful for drafting responses, validating data entry, monitoring workflows, or escalating exceptions. The value comes from reducing manual effort while keeping humans in control.

The difference between helpful automation and risky automation is governance. Clear permissions, approval steps for sensitive actions, logging for traceability, and safe fallbacks are what allow teams to automate routine work without introducing chaos.
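Those four controls can be expressed as a single gate that every agent action passes through. The sketch below is an assumption-heavy illustration: the action names, the `run_agent_action` function, and the status values are hypothetical, and `execute` stands in for whatever actually performs the action.

```python
import logging
from typing import Callable, Optional

logger = logging.getLogger("agent_audit")

# Hypothetical action names; a real system would define its own.
ALLOWED_ACTIONS = {"draft_reply", "flag_exception", "update_order_status"}
NEEDS_APPROVAL = {"update_order_status"}


def run_agent_action(action: str, payload: dict,
                     execute: Callable[[str, dict], str],
                     approved_by: Optional[str] = None) -> dict:
    """Run an agent-proposed action only if it passes permission and approval checks."""
    if action not in ALLOWED_ACTIONS:
        logger.warning("Blocked action outside permissions: %s", action)
        return {"status": "rejected", "action": action}
    if action in NEEDS_APPROVAL and not approved_by:
        logger.info("Holding %s for human approval", action)
        return {"status": "pending_approval", "action": action}
    try:
        result = execute(action, payload)
    except Exception as exc:
        # Safe fallback: record the failure and leave the task for a person.
        logger.error("Action %s failed, handing off to a person: %s", action, exc)
        return {"status": "handed_off", "action": action}
    logger.info("Executed %s (approved_by=%s)", action, approved_by)
    return {"status": "done", "action": action, "result": result}


def fake_execute(action: str, payload: dict) -> str:
    """Stand-in for the real system call."""
    return f"{action} ok"


print(run_agent_action("update_order_status", {"order": "A1"}, fake_execute))
print(run_agent_action("update_order_status", {"order": "A1"}, fake_execute,
                       approved_by="ops_lead"))
```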

Operating AI Over Time

Monitoring, Maintenance, and Governance

Once AI is live, it becomes part of your production environment and needs the same level of care as any mission-critical system. That includes monitoring performance and costs, watching for degradation or drift, and maintaining clear visibility into how decisions are being made.
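Drift can be watched with a simple comparison between what the system saw at launch and what it sees now. The sketch below computes a population stability index over category shares, one common drift measure among several. The function name, the `floor` parameter, and the sample data are illustrative, and any alert threshold would need to be chosen and tuned for the workflow.

```python
import math
from collections import Counter


def population_stability_index(baseline: list[str], current: list[str],
                               floor: float = 1e-4) -> float:
    """Compare how category shares shifted between two samples (0 means identical)."""
    if not baseline or not current:
        raise ValueError("Both samples must be non-empty")
    categories = set(baseline) | set(current)
    base_counts, cur_counts = Counter(baseline), Counter(current)
    psi = 0.0
    for category in categories:
        # The floor avoids log(0) when a category is missing from one sample.
        base_share = max(base_counts[category] / len(baseline), floor)
        cur_share = max(cur_counts[category] / len(current), floor)
        psi += (cur_share - base_share) * math.log(cur_share / base_share)
    return psi


launch_sample = ["repair"] * 50 + ["billing"] * 50
recent_sample = ["repair"] * 80 + ["billing"] * 20
print(round(population_stability_index(launch_sample, recent_sample), 3))  # 0.416
```

The same check can be run on model outputs as well as inputs, since a shift in either is worth a closer look.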

Documentation and ownership matter here. Without clear responsibility and ongoing attention, even well-built systems slowly decay. This is where disciplined project management and operational processes protect long-term value.

Security, Compliance, and Ethical Considerations

In industrial and operational settings, trust is earned through consistency and transparency. AI systems should be threat-modeled like any other production system, with safeguards for sensitive data and live operational feeds.

Where AI influences people, such as in staffing decisions, approvals, or access, bias checks and audit logs become especially important. Governance isn’t about slowing teams down. It’s about making systems understandable, defensible, and safe to rely on.
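A basic bias check can start by comparing how often the system recommends a positive outcome for different groups. The sketch below is a first-pass signal only, not a complete or legally sufficient fairness test. The group labels and decisions are made-up example data.

```python
from collections import defaultdict


def selection_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of positive outcomes per group, from (group, was_selected) pairs."""
    totals: dict[str, int] = defaultdict(int)
    selected: dict[str, int] = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}


def rate_ratio(rates: dict[str, float]) -> float:
    """Lowest group rate divided by the highest; 1.0 means equal rates."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0


decisions = (
    [("group_a", True)] * 4 + [("group_a", False)] * 1
    + [("group_b", True)] * 2 + [("group_b", False)] * 3
)
rates = selection_rates(decisions)
print(rates)              # {'group_a': 0.8, 'group_b': 0.4}
print(rate_ratio(rates))  # 0.5
```

A low ratio does not prove unfair treatment, but it does flag where a person should review the underlying decisions and the audit log.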

Teams, Cost, and Planning Realities

Building and operating AI typically requires a mix of skills: AI developers, software engineers, data specialists, and product leadership to keep work aligned with business outcomes. Small teams often struggle when they try to handle everything at once, especially on top of existing systems.

From a planning perspective, AI work is inherently iterative. MVPs should focus on one workflow and one measurable outcome, with realistic expectations around learning and refinement. The commonly referenced “30% rule” is a useful reminder that a meaningful portion of effort will go toward experimentation and adjustment, not just straight-line delivery.

That upfront realism is what keeps AI initiatives on track and aligned with business goals.

Build AI Software You Can Rely On

AI can transform operations when it’s built on solid engineering and supported over time. Too many teams rush to add AI-powered features, lean too hard on generative AI, and then get burned by reliability gaps, weak monitoring, or unclear ownership. The result is an expensive tool that no one trusts, and a workflow that quietly slips back to spreadsheets and manual work.

NorthBuilt helps independent Midwest businesses develop AI software that fits real workflows, integrates cleanly with existing portals and internal systems, and stays dependable long after launch. Whether you’re adding generative AI to reduce manual admin work, modernizing legacy apps, or building durable AI-powered solutions that your team can run with confidence, we focus on stability, security, and long-term value.

If you’re ready to build AI software to last, book a call with NorthBuilt and let’s map the smartest next step.

How do I develop my own AI software?

At a high level, to develop AI software:

- Define a specific operational problem and success metric

- Audit and prepare data (and label it if needed)

- Choose the right model approach (pretrained, fine-tuned, or custom)

- Prototype quickly to validate feasibility

- Build the surrounding application: UI, services, integrations

- Deploy in stages, measure results, and iterate

- Maintain it: monitoring, updates, retraining, and governance

Internal teams can succeed when they have strong engineering discipline and someone who can own the long-term maintenance.

What software is used to develop AI?

Teams typically use:

- ML frameworks and libraries for training and inference

- Data pipeline and versioning tools

- Deployment tooling for model serving and monitoring

- Security and governance controls for production use

The specific stack depends on your environment, your constraints, and how you need to integrate the AI into existing systems.

Chris Morbitzer

Chris Morbitzer is CEO and co-founder of NorthBuilt, a Minnesota-based software development partner that helps independent manufacturers, agricultural companies, and industrial services firms across the Midwest implement AI and build practical technology solutions.